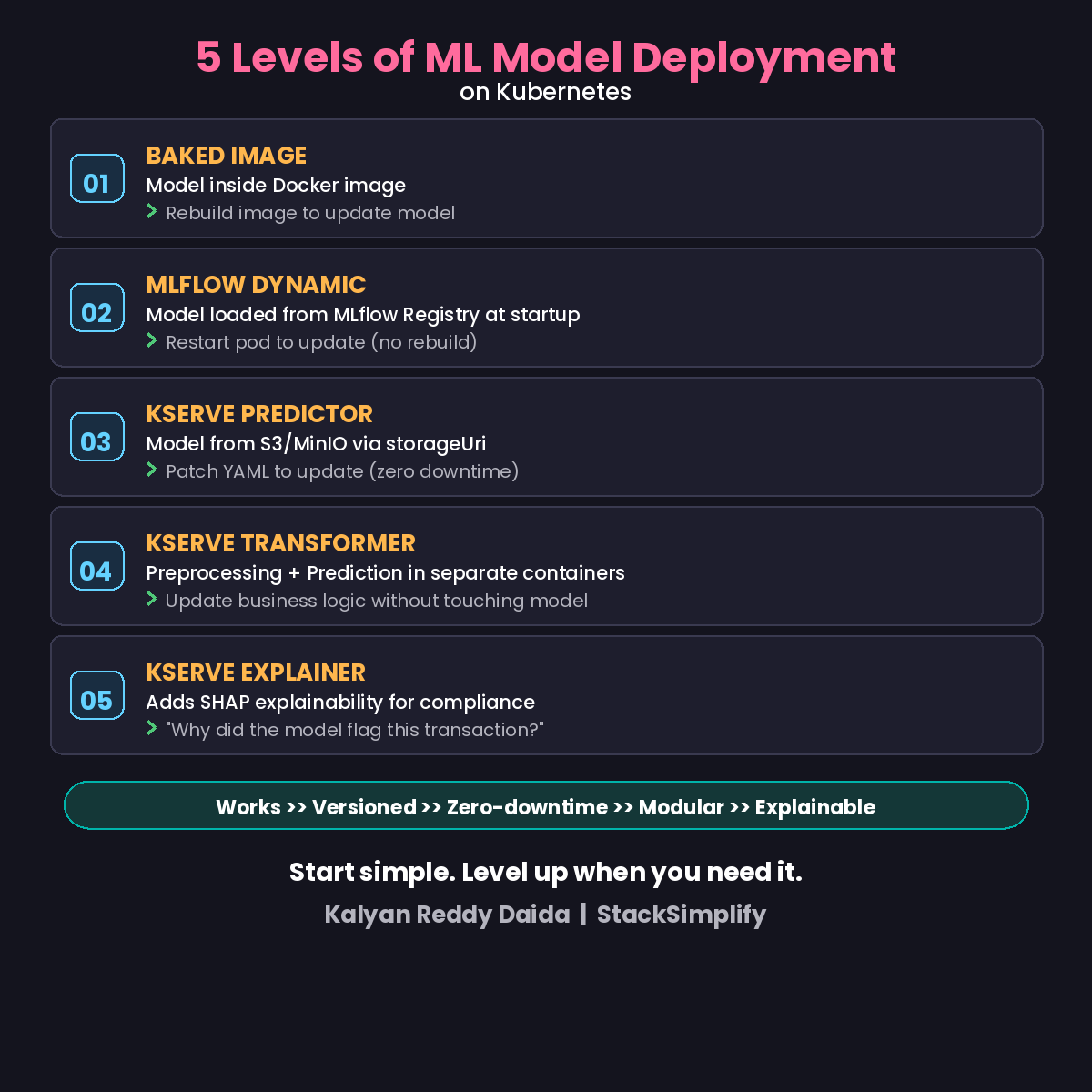

5 Levels of ML Model Deployment on Kubernetes

From baked Docker images to explainable AI. Each level adds production capabilities. Here is the progression every DevOps engineer should know.

You deploy containers to Kubernetes every day. But how do you deploy ML models?

There are five levels, and each builds on the one before it.

The 5 Levels

| Level | Pattern | DevOps Equivalent | When to Use |
|---|---|---|---|
| L1 | Baked Image | Static binary in container | Learning, simple models |
| L2 | MLflow Dynamic | Config from external store | Versioned, no rebuild |
| L3 | KServe Predictor | Deployment + HPA + Ingress | Scalable, zero downtime |
| L4 | KServe Transformer | Sidecar pattern | Modular, independent scaling |
| L5 | KServe Explainer | Audit logging | Compliance, GDPR |

Level 1: Baked Image

Model baked into the Docker image at build time. Simple: docker build, kubectl apply, done.

Downside: Rebuild the image for every model update. Every retrain means a new CI/CD run.
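A minimal sketch of what the serving code in a baked image might look like. The path `/app/model.pkl`, the `MODEL_PATH` variable, and the pickled-dict linear model are assumptions for illustration; a real image would carry whatever artifact your training job produces.

```python
import os
import pickle

# Assumption: the Dockerfile copies the trained artifact to this path at build time.
MODEL_PATH = os.environ.get("MODEL_PATH", "/app/model.pkl")


def load_baked_model(path=MODEL_PATH):
    """Load the model artifact that ships inside the image."""
    with open(path, "rb") as f:
        return pickle.load(f)


def predict(model, features):
    """Score one row with a stand-in linear model: bias + sum(w * x)."""
    return model["bias"] + sum(w * x for w, x in zip(model["weights"], features))
```

Because the artifact lives in an image layer, the only way to change what `load_baked_model` returns is to build and roll out a new image.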

Level 2: MLflow Dynamic Loading

Model loaded from MLflow Registry at pod startup. Update the model? Restart the pod. No image rebuild needed.

This is a big step. Your deployment image stays the same. Only the model version changes.
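Startup loading could be sketched as follows. `MODEL_NAME` and `MODEL_VERSION` are hypothetical environment variables you would set in the Deployment spec; `mlflow.pyfunc.load_model` and the `models:/<name>/<version>` URI scheme are MLflow's own.

```python
import os


def build_model_uri(name, version):
    """Return a Model Registry URI such as models:/fraud-detector/3."""
    return f"models:/{name}/{version}"


def load_model_at_startup():
    """Fetch the model named by env vars; intended to run once when the pod starts."""
    import mlflow.pyfunc  # imported lazily so this module loads without MLflow installed

    # MODEL_NAME and MODEL_VERSION are hypothetical variables from the Deployment spec.
    uri = build_model_uri(os.environ["MODEL_NAME"], os.environ["MODEL_VERSION"])
    # MLflow reads the tracking server address from MLFLOW_TRACKING_URI.
    return mlflow.pyfunc.load_model(uri)
```

With this shape, shipping a new model means changing `MODEL_VERSION` and restarting the pod; the image tag does not move.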

Level 3: KServe InferenceService

KServe gives you a Kubernetes CRD that wraps Deployment + HPA + Ingress into one resource. Model loaded from S3/MinIO via storageUri.

Update? Patch the YAML. KServe handles rolling updates with zero downtime.
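To show the shape of the resource, here is a sketch that builds an InferenceService manifest as a Python dict and prints it as JSON, which kubectl accepts in place of YAML. The service name, bucket path, replica counts, and the `sklearn` model format are placeholder assumptions.

```python
import json


def inference_service(name, storage_uri, min_replicas=1, max_replicas=3):
    """Build a KServe InferenceService manifest as a plain dict."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {
            "predictor": {
                "minReplicas": min_replicas,
                "maxReplicas": max_replicas,
                "model": {
                    "modelFormat": {"name": "sklearn"},  # assumption: a scikit-learn model
                    "storageUri": storage_uri,
                },
            }
        },
    }


def storage_uri_patch(new_uri):
    """Merge patch that points the predictor at a new model artifact."""
    return {"spec": {"predictor": {"model": {"storageUri": new_uri}}}}


if __name__ == "__main__":
    # Placeholder name and bucket path; pipe the output to `kubectl apply -f -`.
    manifest = inference_service("fraud-detector", "s3://models/fraud-detector/v1")
    print(json.dumps(manifest, indent=2))
```

The output of `storage_uri_patch` can be passed to `kubectl patch inferenceservice fraud-detector --type merge -p '<json>'` to roll out a new model version.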

Level 4: KServe Transformer + Predictor

Adds a preprocessing component in front of the model. The Transformer handles feature engineering and business logic. The Predictor handles pure ML inference. KServe deploys the two separately, which is what makes the split useful.

Independent lifecycles. Independent scaling. Model retrained? Only the Predictor redeploys.
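The split might look like this in practice. The transaction fields (`amount`, `country`, `card_country`, `hour`) and the feature choices are invented for illustration; the `{"instances": [...]}` payload follows KServe's V1 inference protocol. In a real transformer this logic would typically sit in the `preprocess` method of a custom KServe model class.

```python
import math


def preprocess(transaction):
    """Turn a raw transaction into the V1 inference payload the predictor expects."""
    cross_border = transaction["country"] != transaction["card_country"]
    features = [
        math.log1p(transaction["amount"]),  # compress large amounts
        1.0 if cross_border else 0.0,       # cross-border flag
        float(transaction["hour"]) / 23.0,  # scale hour of day to [0, 1]
    ]
    return {"instances": [features]}


def with_transformer(predictor_spec, transformer_image):
    """Compose an InferenceService spec with a separate transformer component."""
    return {
        "transformer": {
            "containers": [{"name": "kserve-container", "image": transformer_image}]
        },
        "predictor": predictor_spec,
    }
```

Changing a feature rule touches only the transformer image, and swapping the model artifact touches only the predictor spec.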

Level 5: KServe Explainer

Adds an explainer component that returns feature attributions, such as SHAP values, alongside predictions. “Why did the model flag this transaction?” Often needed to support GDPR compliance, financial audits, and healthcare decisions.
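To make the idea of an explanation concrete, here is a hand-rolled sketch for the simplest case. For a linear model with independent features, the SHAP value of feature i reduces to w_i * (x_i - mean_i). This is not how a KServe explainer is implemented; a real deployment would rely on an explainability library, and the numbers in the usage note are made up.

```python
def predict(weights, bias, x):
    """Linear model: bias + sum(w * x)."""
    return bias + sum(w * xi for w, xi in zip(weights, x))


def linear_shap(weights, bias, baseline, x):
    """Per-feature contributions for a linear model, assuming independent features.

    baseline holds the mean of each feature over a reference dataset.
    Returns (base_value, contributions), where base_value is the prediction
    at the baseline and contributions[i] is feature i's share of the difference.
    """
    contributions = [w * (xi - mu) for w, xi, mu in zip(weights, x, baseline)]
    base_value = predict(weights, bias, baseline)
    return base_value, contributions
```

With weights `[2.0, -1.0]`, bias `0.5`, baseline `[1.0, 2.0]`, and input `[3.0, 0.0]`, the contributions are `[4.0, 2.0]` on a base value of `0.5`, which add up to the prediction of `6.5`. That additivity is what lets an auditor read each number as one feature's share of the decision.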

L1: Works. L2: Versioned. L3: Scalable. L4: Modular. L5: Explainable.

Start with Level 1 to learn. Deploy Level 4+ in production. See also: Scale-to-Zero for cost optimization at any level.

This is Part 5 of the MLOps for DevOps Engineers series.