A/B Testing for ML Models: When Offline Metrics Lie

You retrained the model. Accuracy went up 2% on the test set. You deployed it. Revenue dropped 5%.

What happened? Offline metrics lie. A model that scores better on historical data can still perform worse with real users, because a held-out test set cannot show how people respond to the new predictions.

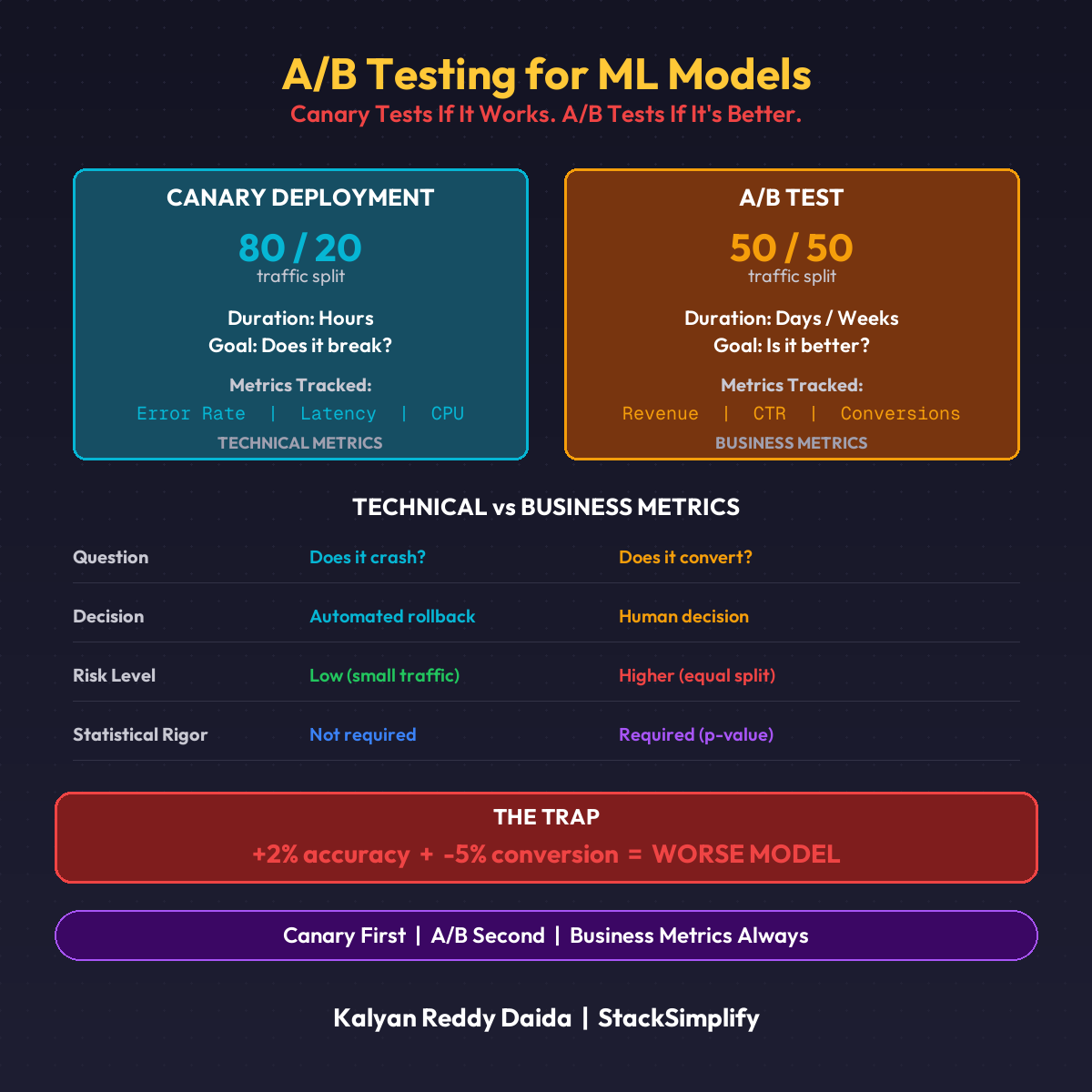

Canary vs A/B Testing

| Approach | Question It Answers | Traffic Split |
|---|---|---|
| Canary | “Does it break anything?” | 10-20% to new model |
| A/B Testing | “Does it actually improve outcomes?” | 50/50 to both models |

You need both. Canary first, then A/B.

How to Split Traffic

KServe + Istio makes this simple:

- Deploy both models behind the same endpoint

- Set traffic split (50/50 for A/B)

- Tag requests so you can trace which model served which prediction

- Log everything: predictions, latency, and downstream outcomes

The split happens at the infrastructure level. Your application code doesn’t change.
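As a rough illustration of what an infrastructure-level split can look like, the sketch below builds an Istio `VirtualService` manifest as a Python dict and prints it as JSON. The resource name, host names, and the `x-model-variant` response header are hypothetical placeholders, and a real KServe setup may manage routing differently, so treat this as a sketch under those assumptions rather than the configuration to use.

```python
import json


def build_ab_virtual_service(name, host, variants):
    """Return an Istio VirtualService manifest (as a dict) that splits traffic by weight.

    `variants` maps a variant label to a (destination_host, weight_percent) pair.
    All names passed in here are illustrative, not taken from a real cluster.
    """
    total = sum(weight for _, weight in variants.values())
    if total != 100:
        raise ValueError(f"weights must sum to 100, got {total}")

    routes = []
    for label, (destination_host, weight) in variants.items():
        routes.append({
            "destination": {"host": destination_host},
            "weight": weight,
            # Stamp each response with the variant label (hypothetical header name)
            # so downstream logs can record which model served the prediction.
            "headers": {"response": {"set": {"x-model-variant": label}}},
        })

    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": name},
        "spec": {"hosts": [host], "http": [{"route": routes}]},
    }


manifest = build_ab_virtual_service(
    name="recommender-ab",                      # hypothetical resource name
    host="recommender.example.internal",        # hypothetical shared endpoint
    variants={
        "control": ("recommender-v1.models.svc.cluster.local", 50),
        "treatment": ("recommender-v2.models.svc.cluster.local", 50),
    },
)
print(json.dumps(manifest, indent=2))
```

One caveat on this design: weight-based routing assigns each request independently, so the same user can hit both models. If your business metric is measured per user, you will likely need sticky assignment on top of this, which the sketch does not cover.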

What to Measure

Technical metrics alone are not enough. You need business metrics.

| Type | Metrics |
|---|---|
| Technical | Accuracy, precision, recall, F1, latency |
| Business | Revenue per user, click-through rate, conversion rate, churn |

A model with 2% higher accuracy but 5% lower conversion rate is a worse model. Period.
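To compare models on a business metric, the logged requests have to be rolled up per variant. Here is a minimal sketch that computes conversion rate per variant, counting each user once. The record fields (`user_id`, `variant`, `converted`) and the sample rows are made up for illustration; your logging schema will differ.

```python
from collections import defaultdict


def conversion_by_variant(records):
    """Aggregate logged requests into per-variant user counts and conversion rates.

    Users are deduplicated, so a user with many requests still counts once.
    """
    users = defaultdict(set)
    converted = defaultdict(set)
    for record in records:
        users[record["variant"]].add(record["user_id"])
        if record["converted"]:
            converted[record["variant"]].add(record["user_id"])

    return {
        variant: {
            "users": len(users[variant]),
            "conversions": len(converted[variant]),
            "rate": len(converted[variant]) / len(users[variant]),
        }
        for variant in users
    }


# Illustrative records only, not real data.
records = [
    {"user_id": "u1", "variant": "control", "converted": True},
    {"user_id": "u1", "variant": "control", "converted": False},  # repeat request
    {"user_id": "u2", "variant": "control", "converted": False},
    {"user_id": "u3", "variant": "treatment", "converted": False},
    {"user_id": "u4", "variant": "treatment", "converted": True},
    {"user_id": "u5", "variant": "treatment", "converted": False},
]

summary = conversion_by_variant(records)
for variant, stats in summary.items():
    print(variant, stats)
```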

Run the test until it has collected the sample size you planned for, so the result can reach statistical significance. Stopping as soon as the numbers look good is the number one A/B testing mistake.
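One common way to check significance for a conversion-rate comparison is a two-proportion z-test. The sketch below uses only the standard library. The counts in the example are invented for illustration, and a z-test is just one reasonable choice here, not necessarily the right test for every metric.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rate between variants A and B.

    Returns (z, p_value). A negative z means B converts worse than A.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test: pooled conversion rate is 0 or 1")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: 5.0% conversion on the old model, 4.5% on the new one.
z, p_value = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=450, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
if p_value < 0.05:
    print("Difference is significant at the 5% level")
else:
    print("Not significant yet: keep collecting data")
```

With these made-up counts, a drop from 5.0% to 4.5% across 10,000 users per variant does not clear the 5% threshold, which shows how a difference that looks large can still be noise. Checking the p-value repeatedly and stopping at the first value below 0.05 inflates false positives, so fix the sample size before the test starts.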

When A/B Testing Is Overkill

| Scenario | Use A/B? |
|---|---|
| Model directly impacts revenue | Yes |
| Enough traffic for significance in days | Yes |
| Internal batch predictions | No. Compare offline metrics |
| Low-traffic endpoints | No. Won’t reach significance |
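Whether an endpoint has "enough traffic" can be estimated before you start. The sketch below uses the standard sample-size approximation for comparing two proportions, then converts it to days at a given traffic level. The baseline rate, the lift you want to detect, and the traffic figures are all assumptions you would replace with your own numbers.

```python
import math
from statistics import NormalDist


def users_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate users needed per variant to detect a relative change in conversion rate."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def days_to_reach_sample(daily_users, baseline_rate, relative_lift):
    """Days of a 50/50 split needed to fill both variants, assuming steady traffic."""
    needed = 2 * users_per_variant(baseline_rate, relative_lift)
    return math.ceil(needed / daily_users)


# Hypothetical inputs: 5% baseline conversion, detect a 10% relative change.
n = users_per_variant(baseline_rate=0.05, relative_lift=0.10)
print("users per variant:", n)
print("days at 50,000 users/day:", days_to_reach_sample(50_000, 0.05, 0.10))
print("days at 500 users/day:", days_to_reach_sample(500, 0.05, 0.10))
```

With these illustrative inputs the requirement lands in the tens of thousands of users per variant: a busy endpoint fills that in days, while a low-traffic one would need months. That gap is the practical meaning of the last two rows in the table.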

Start with canary. Graduate to A/B when the business impact justifies it.

This is Part 13 of the MLOps for DevOps Engineers series.