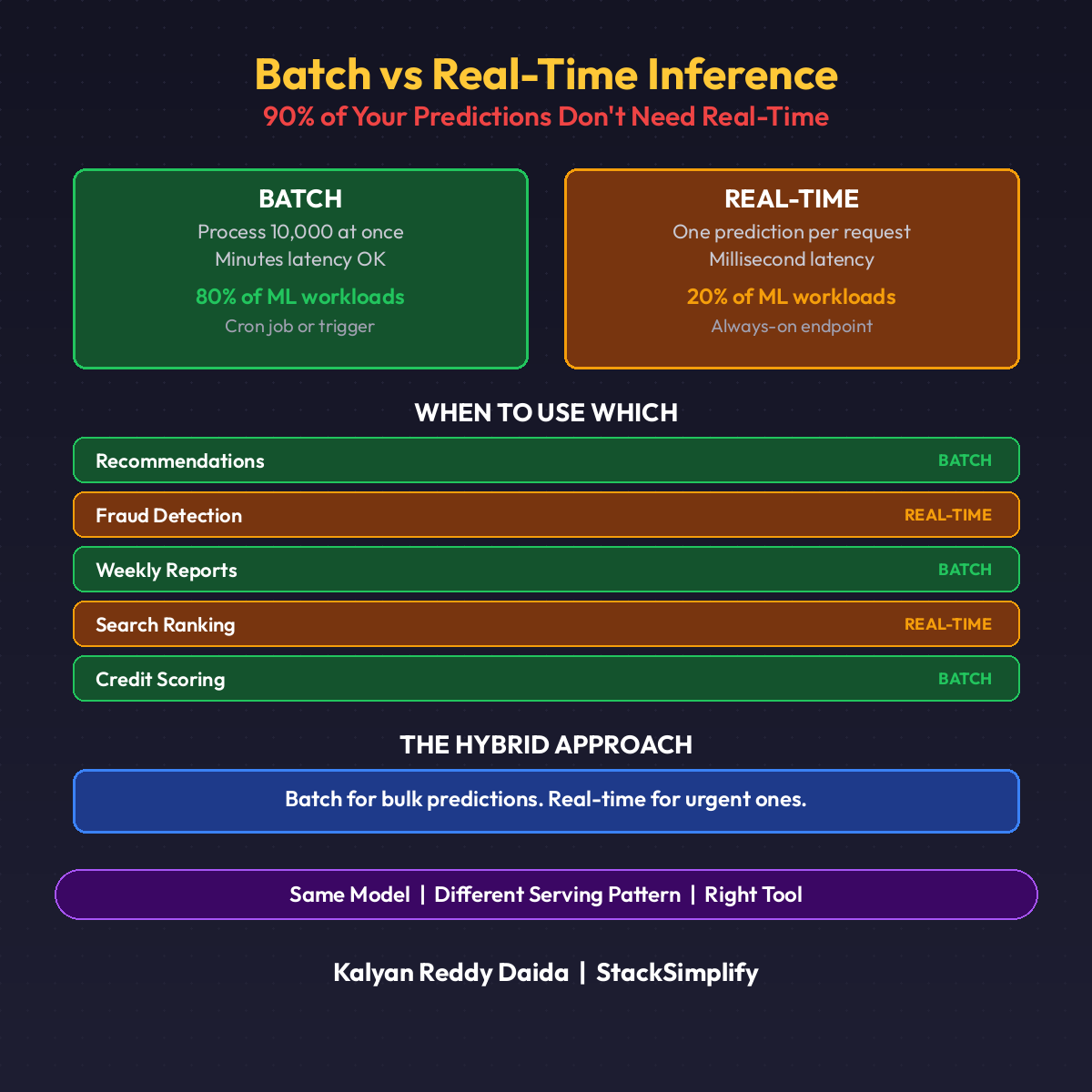

Batch vs Real-Time ML Inference: 90% of Predictions Can Be Batch

Here is the decision framework and the cost math showing 99.5% savings.

Your model runs in real-time. 90% of your predictions do not need to.

That is the most expensive assumption in ML infrastructure. A recommendation engine that refreshes daily does not need always-on pods. A credit risk score computed once at application time does not need a replica running at 3 AM.

Most teams default to real-time because that is how their first model shipped. Every model after inherits the same pattern. And the same bill.

When To Use Real-Time

Real-time inference runs per request. Model loaded in memory. Response in milliseconds.

| Use Case | Why Real-Time |
|---|---|
| Transaction fraud detection | Decision must happen before the payment clears |
| Search ranking | Input unique to each query |
| Dynamic pricing | Tied to real-time inventory and demand |
| Content moderation | Block before publish |

Choose real-time when latency directly affects revenue or safety and the prediction cannot be precomputed because its inputs exist only at request time.

When To Use Batch

Batch inference runs on a schedule. Process all inputs at once. Store results. Serve from cache.

| Use Case | Why Batch |
|---|---|
| Daily churn risk scores | Sales team queries next morning |
| Weekly credit scoring | Inputs arrive via nightly ETL |
| Product recommendations (6h refresh) | Freshness measured in hours |
| Batch fraud risk reports | Consumed by analysts, not users |

Choose batch when freshness is measured in hours or days and cost matters more than latency.

The Cost Math

Real scenario: score 50,000 customers daily for churn risk.

| Approach | Setup | Monthly Cost |
|---|---|---|
| Real-time | 2 pods x 24/7 x $0.10/hr | ~$146 |
| Batch | 1 K8s Job x 15 min/day x $0.10/hr | ~$0.75 |

Savings: 99.5%. The predictions are identical. Freshness is daily instead of instant. Two orders of magnitude cost difference.

Scale to 10 models: $17,430 annual savings from one architectural decision.
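The arithmetic behind those figures can be checked in a few lines. This is a sketch that assumes a 730-hour average month for always-on pods and a 30-day month for the daily job; those two assumptions are what reproduce the ~$146 and ~$0.75 in the table. The function names are illustrative only.

```python
HOURS_PER_MONTH = 730   # assumption: average month (8,760 hours / 12)
DAYS_PER_MONTH = 30     # assumption: the daily job runs 30 times a month
RATE_PER_HOUR = 0.10    # $ per pod-hour, taken from the table


def realtime_monthly_cost(pods: int, rate: float = RATE_PER_HOUR) -> float:
    """Always-on pods are billed for every hour of the month."""
    return pods * HOURS_PER_MONTH * rate


def batch_monthly_cost(minutes_per_day: float, rate: float = RATE_PER_HOUR) -> float:
    """A scheduled job is billed only for the minutes it actually runs."""
    return (minutes_per_day / 60) * DAYS_PER_MONTH * rate


realtime = realtime_monthly_cost(pods=2)
batch = batch_monthly_cost(minutes_per_day=15)
savings_pct = (realtime - batch) / realtime * 100
annual_savings_10_models = (realtime - batch) * 12 * 10

print(f"real-time: ${realtime:.2f}/month")
print(f"batch:     ${batch:.2f}/month")
print(f"savings:   {savings_pct:.1f}%")
print(f"10 models: ${annual_savings_10_models:,.0f}/year saved")
```

Swapping in your own pod count, hourly rate, and job duration gives a quick first estimate before any migration work starts.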

The Decision Framework

Walk every model through these five questions:

1. Freshness

How stale can predictions be before losing value? Seconds = real-time. Hours or days = batch.

2. Input Source

User click, search, transaction = real-time. Nightly ETL or scheduled dump = batch.

3. Consumption Pattern

Inline in a user flow (must wait) = real-time. Looked up from a table (precomputed) = batch.

4. Cost Sensitivity

Cost secondary to latency = real-time. Cost is the constraint = batch.

5. Failure Mode

Revenue lost if prediction delayed 30s = real-time. Nobody notices = batch.

Most teams discover 2-3 models need real-time. The rest do not.
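One way to apply the five questions consistently across a model inventory is to encode them as a checklist. The sketch below is an assumption about how to combine the answers: the post does not prescribe a scoring rule, so the majority vote, the 60-second freshness cutoff, and the `ModelProfile` and `recommend` names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    max_staleness_seconds: float  # 1. Freshness
    input_is_user_event: bool     # 2. Input source (click, search, transaction)
    consumed_inline: bool         # 3. Consumption pattern (caller must wait)
    cost_is_constraint: bool      # 4. Cost sensitivity
    delay_loses_revenue: bool     # 5. Failure mode (a 30s delay costs money)


def recommend(profile: ModelProfile, realtime_votes_needed: int = 3) -> str:
    """Return 'real-time' if most answers point that way, else 'batch'.

    The majority-vote rule and the 60-second cutoff are illustrative choices.
    """
    votes = [
        profile.max_staleness_seconds < 60,
        profile.input_is_user_event,
        profile.consumed_inline,
        not profile.cost_is_constraint,
        profile.delay_loses_revenue,
    ]
    return "real-time" if sum(votes) >= realtime_votes_needed else "batch"


fraud = ModelProfile("transaction-fraud", 1, True, True, False, True)
churn = ModelProfile("daily-churn", 86_400, False, False, True, False)

for profile in (fraud, churn):
    print(f"{profile.name}: {recommend(profile)}")
```

A model that lands near the threshold is a good candidate for one of the hybrid patterns rather than a pure choice.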

A Minimal Batch Inference Script
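The original script is not reproduced here, so what follows is a stand-in sketch using only the Python standard library. It assumes a hypothetical model stored as JSON weights for a tiny logistic scorer, an input CSV with a `customer_id` column plus numeric feature columns, and an output CSV of scores. A real job would more likely load a serialized model and read from a warehouse table, but the shape is the same: load once, score everything, write results, exit.

```python
import csv
import json
import math


def load_model(model_path: str) -> dict:
    """Load hypothetical model weights: {"bias": float, "weights": {feature: float}}."""
    with open(model_path) as f:
        return json.load(f)


def predict(model: dict, row: dict) -> float:
    """Logistic score in [0, 1] computed from the row's feature columns."""
    z = model["bias"] + sum(
        weight * float(row[feature]) for feature, weight in model["weights"].items()
    )
    z = max(-60.0, min(60.0, z))  # clamp so math.exp cannot overflow
    return 1.0 / (1.0 + math.exp(-z))


def run_batch(model_path: str, input_path: str, output_path: str) -> int:
    """Score every input row, write the results, and return the row count."""
    model = load_model(model_path)  # loaded once per run, not once per request
    scored = 0
    with open(input_path, newline="") as src, open(output_path, "w", newline="") as dst:
        reader = csv.DictReader(src)
        writer = csv.DictWriter(dst, fieldnames=["customer_id", "churn_score"])
        writer.writeheader()
        for row in reader:
            writer.writerow(
                {
                    "customer_id": row["customer_id"],
                    "churn_score": f"{predict(model, row):.4f}",
                }
            )
            scored += 1
    return scored
```

A scheduler entrypoint would call something like `run_batch("model.json", "customers.csv", "scores.csv")` and exit; the file names are placeholders.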


No pods running 24/7. No cold start. No autoscaler tuning. Load model, score everything, write results, done. Schedule with a Kubernetes CronJob or Airflow DAG.

Three Batch Architectures

| Architecture | Best For | Scale |
|---|---|---|
| Kubernetes CronJob | Simple, single-model scoring | Under 1M rows |
| Spark on Kubernetes | Large-scale distributed scoring | 1M to 100M+ rows |
| SageMaker Batch Transform | AWS-native teams | Any size, fully managed |

All three share one property: compute runs only during scoring, then stops. No idle pods. No wasted spend.
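For the simplest of the three, a CronJob manifest can be generated from Python and applied as JSON, which `kubectl apply -f` accepts alongside YAML. This is a sketch: the job name, image, schedule, and command are hypothetical placeholders, and the `Forbid` concurrency policy and retry limit are illustrative defaults rather than recommendations from the post.

```python
import json


def cronjob_manifest(name: str, image: str, schedule: str, command: list) -> dict:
    """Build a batch/v1 CronJob that runs one scoring container per schedule tick."""
    return {
        "apiVersion": "batch/v1",
        "kind": "CronJob",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,          # standard cron syntax
            "concurrencyPolicy": "Forbid", # skip a run if the previous one is still going
            "jobTemplate": {
                "spec": {
                    "backoffLimit": 2,     # retry a failed pod at most twice
                    "template": {
                        "spec": {
                            "restartPolicy": "Never",
                            "containers": [
                                {"name": name, "image": image, "command": command}
                            ],
                        }
                    },
                }
            },
        },
    }


manifest = cronjob_manifest(
    name="churn-batch-scoring",                        # hypothetical
    image="registry.example.com/churn-scorer:latest",  # hypothetical
    schedule="0 2 * * *",                              # every day at 02:00
    command=["python", "score.py"],                    # hypothetical entrypoint
)
print(json.dumps(manifest, indent=2))
```

The pod exists only while the container runs, which is the property the table is pointing at: compute is billed for the scoring window and nothing else.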

Three Hybrid Patterns

Most production systems use both. Same ML platform, two serving patterns.

Pattern 1: Batch Precompute + Real-Time Lookup

A nightly batch job scores all customers and writes the results to Redis or a Feast feature store. The real-time API reads the precomputed score. Latency: under 5ms (just a cache hit, no inference).

Pattern 2: Batch Default + Real-Time Override

Batch covers 95% of requests. Real-time handles new or unseen inputs that have no precomputed score. Cache miss triggers live inference.
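The lookup-then-fallback logic can be sketched in a few lines. A plain dict stands in for Redis or a feature store here, and `live_model` is a hypothetical callable wrapping whatever real-time endpoint exists; both are assumptions for illustration.

```python
from typing import Callable, Dict, Tuple


def get_score(
    customer_id: str,
    cache: Dict[str, float],
    live_model: Callable[[str], float],
) -> Tuple[float, str]:
    """Return (score, source). Serve the batch score if present, else infer live."""
    cached = cache.get(customer_id)
    if cached is not None:
        return cached, "batch"       # cache hit: no inference at request time
    score = live_model(customer_id)  # cache miss: new or unseen input
    cache[customer_id] = score       # store so the next request is a hit
    return score, "real-time"


# Scores written by last night's batch run (illustrative values).
precomputed = {"cust-001": 0.12, "cust-002": 0.87}

print(get_score("cust-001", precomputed, live_model=lambda _id: 0.5))
print(get_score("cust-999", precomputed, live_model=lambda _id: 0.5))
```

Tracking the `source` value gives a direct measure of how much traffic the batch path really covers, which is worth checking before sizing the real-time fallback.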

Pattern 3: Real-Time Fast + Batch Deep

A lightweight model runs in real time (simple features, 20ms). A heavy ensemble runs in batch (deep features, high accuracy). Instant decision now, refinement later. Fraud detection uses this for an instant block plus nightly review.
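A minimal sketch of this split, assuming two hypothetical scoring callables and an in-memory list as the review queue. The thresholds are placeholders, and a production system would use a durable queue or table instead of a list.

```python
from typing import Callable, List


def decide_now(
    txn: dict,
    fast_model: Callable[[dict], float],
    review_queue: List[dict],
    block_threshold: float = 0.9,
) -> str:
    """Real-time path: cheap model decides immediately, txn is queued for review."""
    score = fast_model(txn)
    review_queue.append(txn)  # every transaction gets a deeper look later
    return "block" if score >= block_threshold else "allow"


def nightly_review(
    review_queue: List[dict],
    deep_model: Callable[[dict], float],
    flag_threshold: float = 0.5,
) -> List[dict]:
    """Batch path: heavy model re-scores the day's transactions and flags suspects."""
    flagged = [txn for txn in review_queue if deep_model(txn) >= flag_threshold]
    review_queue.clear()
    return flagged
```

The real-time path stays cheap because it never calls the heavy model; the batch path can afford expensive features because nobody is waiting on it.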

The DevOps Parallel

You already understand this trade-off. Sync vs async processing.

| Sync (Real-Time) | Async (Batch) |
|---|---|
| HTTP request blocks the caller | Kafka message decouples producer |
| Sub-second response | Bulk processing, fault-tolerant |
| Expensive at scale | Cheap at scale |

Nobody makes a synchronous HTTP call to process 10M log entries. Nobody sends a Kafka message when they need a sub-second response. Batch vs real-time inference is the same decision.

Quick Reference

| Tool | Purpose |
|---|---|
| KServe | Real-time inference with scale-to-zero |
| Kubernetes CronJob | Simple scheduled batch scoring |
| Feast | Feature store bridging batch and real-time |
| Airflow | Orchestrate complex batch DAGs |
| SageMaker Batch Transform | Managed batch on AWS |

(Pair this with Part 8: Scale-to-Zero and Part 18: ML Cost Optimization for the full cost-cutting stack.)

This is Part 20 of the MLOps for DevOps Engineers series.