Canary Deployments for ML Models with KServe and Istio

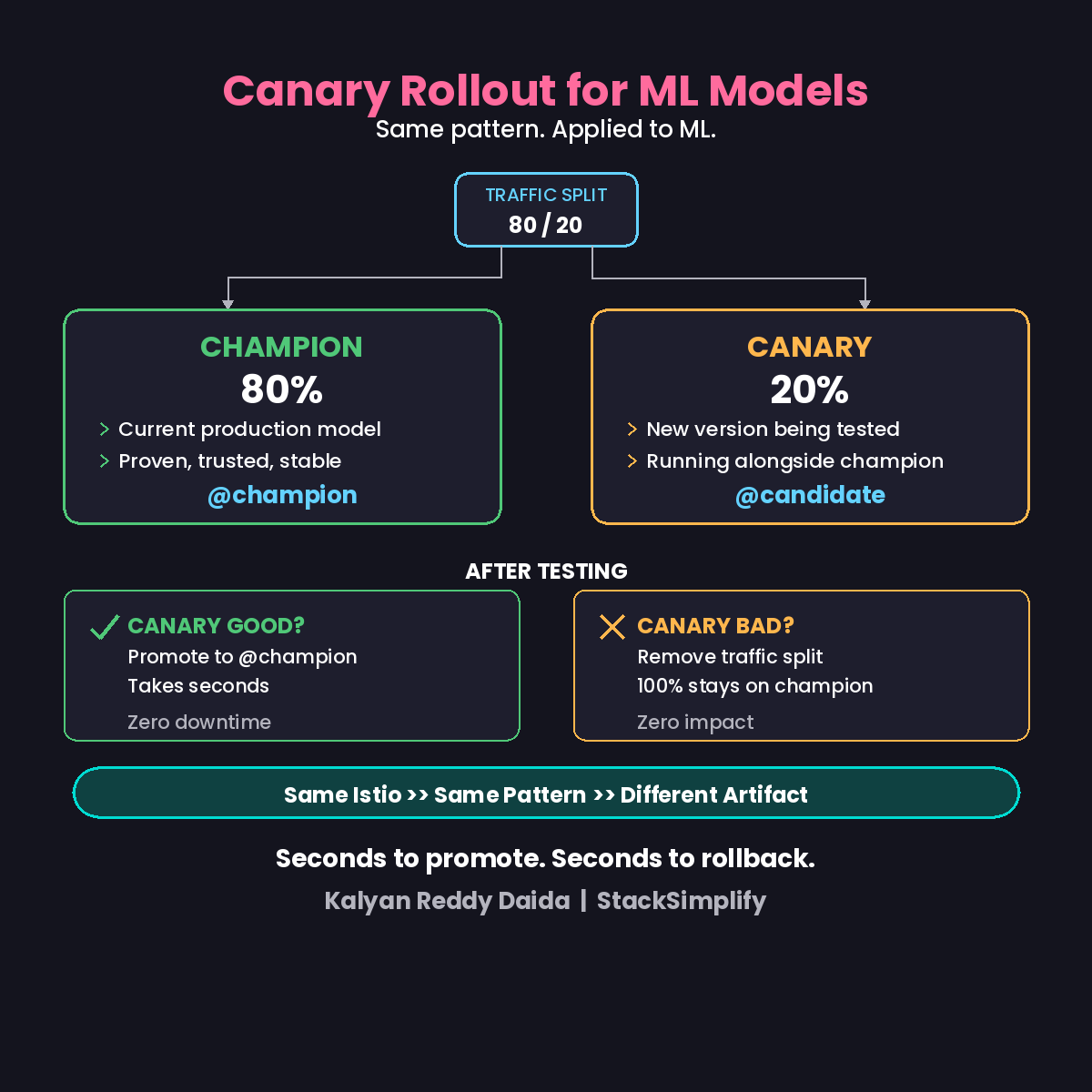

You do canary deployments for APIs. Why not for ML models? Here is how KServe and Istio split traffic between champion and candidate models.

New model ready. Looks good in testing. Deploy to production. Hope it works. It doesn’t. Rollback takes 5 minutes. Five minutes of garbage predictions. Damage done.

How It Works

| Role | Traffic | Description |
|---|---|---|
| Champion (80%) | Production traffic | Current model, proven, stable |
| Canary (20%) | Test traffic | New version, running alongside |

Both run simultaneously. Same endpoint. Istio handles the traffic split.
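To make the 80/20 split concrete, here is a minimal simulation of weighted routing. It is only an illustration of the idea, not how Istio implements it internally; the function names and the fixed seed are assumptions made for this sketch.

```python
import random
from collections import Counter


def route(rng: random.Random, canary_percent: int) -> str:
    """Pick a backend for one request with a weighted coin flip."""
    # randrange(100) yields 0..99, so canary_percent of those values map to the canary.
    return "canary" if rng.randrange(100) < canary_percent else "champion"


def simulate(n_requests: int, canary_percent: int, seed: int = 0) -> Counter:
    """Route n_requests and count how many land on each backend."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same counts
    return Counter(route(rng, canary_percent) for _ in range(n_requests))


counts = simulate(10_000, canary_percent=20)
for backend in ("champion", "canary"):
    print(f"{backend}: {counts[backend]} requests ({counts[backend] / 10_000:.1%})")
```

Each individual request goes to exactly one model. The 80/20 ratio only shows up across many requests, which is why a canary needs real traffic volume before its metrics mean anything.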

The 4-Step Process

Step 1: Deploy your champion model.

Step 2: Point the KServe InferenceService at the new model version and add canaryTrafficPercent: 20 to its predictor spec.

Step 3: KServe and Istio route traffic automatically: 80% to champion pods, 20% to canary pods.

Step 4: Evaluate and decide.

- Canary good? Promote to @champion. Takes seconds.

- Canary bad? Remove the traffic split. All traffic goes back to the champion.

The same pattern you use for microservices. The same Istio you already know. Applied to ML models.
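The steps above can be sketched as a manifest built in Python. This is a rough sketch, not a definitive setup: the service name, storage URI, and model format are hypothetical, and the promote and rollback conventions (remove the field to promote, set it to 0 to roll back) follow the KServe canary rollout documentation as I understand it, so check them against the KServe version you run.

```python
import copy
import json


def inference_service(name, storage_uri, model_format, canary_percent=None):
    """Build a KServe InferenceService manifest as a plain dict."""
    predictor = {
        "model": {
            "modelFormat": {"name": model_format},
            "storageUri": storage_uri,
        }
    }
    if canary_percent is not None:
        if not 0 <= canary_percent <= 100:
            raise ValueError("canary_percent must be between 0 and 100")
        # Share of traffic sent to the newest revision; the rest stays on the previous one.
        predictor["canaryTrafficPercent"] = canary_percent
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {"predictor": predictor},
    }


def promote(manifest):
    """Return a copy without canaryTrafficPercent, so the new revision takes all traffic."""
    promoted = copy.deepcopy(manifest)
    promoted["spec"]["predictor"].pop("canaryTrafficPercent", None)
    return promoted


def roll_back(manifest):
    """Return a copy with canaryTrafficPercent set to 0, so the previous revision takes all traffic."""
    rolled_back = copy.deepcopy(manifest)
    rolled_back["spec"]["predictor"]["canaryTrafficPercent"] = 0
    return rolled_back


# Hypothetical names and paths, for illustration only.
canary = inference_service(
    name="fraud-model",
    storage_uri="s3://example-bucket/models/fraud/v2",
    model_format="sklearn",
    canary_percent=20,
)
print(json.dumps(canary, indent=2))
```

kubectl apply accepts JSON as well as YAML, so the printed manifest can be saved to a file and applied directly. Promotion and rollback are then the same apply with a one-field difference.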

Canary vs Full Cutover

| Approach | Risk | Rollback Time |
|---|---|---|
| Full cutover | 100% traffic hits new model | Minutes (redeploy) |
| Canary | Only 20% traffic at risk | Seconds (remove split) |
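A back-of-the-envelope calculation shows how far apart the two rows are. The request rate and the 10-second canary rollback below are assumed numbers for illustration; only the 5-minute redeploy comes from the scenario at the top of this post. The sketch also ignores the time it takes to notice the problem in the first place.

```python
def exposed_requests(requests_per_second: float,
                     traffic_percent: float,
                     seconds_to_roll_back: float) -> float:
    """Requests served by the new model while the rollback is in progress."""
    return requests_per_second * traffic_percent / 100 * seconds_to_roll_back


RPS = 100  # assumed request rate, purely illustrative

full_cutover = exposed_requests(RPS, traffic_percent=100, seconds_to_roll_back=5 * 60)
canary = exposed_requests(RPS, traffic_percent=20, seconds_to_roll_back=10)

print(f"full cutover: {full_cutover:,.0f} requests")  # full cutover: 30,000 requests
print(f"canary:       {canary:,.0f} requests")        # canary:       200 requests
```

Under these assumptions the canary exposes 150 times fewer requests to a bad model, because both the traffic share and the rollback time shrink.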

What to Monitor During Canary

While the canary is running, watch these metrics across both models:

- Prediction latency (P50, P95, P99). New model significantly slower? Problem.

- Error rate. Any 5xx responses from the canary? Kill it immediately.

- Prediction distribution. Is the canary predicting significantly differently from the champion? Could mean a bug in preprocessing.

- Business metrics. If you can track downstream outcomes (conversion, fraud caught), compare them.
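The first three checks can be sketched as a simple gate. The thresholds (20% latency regression, 0.1 distribution shift), the ModelStats container, and the use of total variation distance for comparing predictions are all assumptions chosen for this example. In practice the inputs would come from your observability stack rather than in-memory lists.

```python
from __future__ import annotations

import statistics
from collections import Counter
from dataclasses import dataclass


@dataclass
class ModelStats:
    """Hypothetical container for metrics collected from one model."""
    latencies_ms: list[float]
    status_codes: list[int]
    predictions: list[str]


def percentile(values: list[float], p: int) -> float:
    # quantiles(n=100) returns 99 cut points; index p - 1 is the p-th percentile.
    return statistics.quantiles(values, n=100)[p - 1]


def error_rate(status_codes: list[int]) -> float:
    """Fraction of responses with a 5xx status code."""
    return sum(500 <= code <= 599 for code in status_codes) / len(status_codes)


def distribution_shift(champion_preds: list[str], canary_preds: list[str]) -> float:
    """Total variation distance between label frequencies (0 = identical, 1 = disjoint)."""
    champion, canary = Counter(champion_preds), Counter(canary_preds)
    labels = set(champion) | set(canary)
    return 0.5 * sum(
        abs(champion[label] / len(champion_preds) - canary[label] / len(canary_preds))
        for label in labels
    )


def evaluate_canary(champion: ModelStats, canary: ModelStats,
                    max_latency_ratio: float = 1.2,
                    max_shift: float = 0.1) -> list[str]:
    """Return a list of problems; an empty list means the canary passed these checks."""
    problems = []
    if error_rate(canary.status_codes) > 0:
        problems.append("canary returned 5xx responses")
    for p in (50, 95, 99):
        limit = max_latency_ratio * percentile(champion.latencies_ms, p)
        if percentile(canary.latencies_ms, p) > limit:
            problems.append(f"P{p} latency regressed")
    if distribution_shift(champion.predictions, canary.predictions) > max_shift:
        problems.append("prediction distribution shifted")
    return problems
```

A shifted prediction distribution is not automatically a failure, since a better model may legitimately predict differently. The gate only flags it so a human looks before promoting.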

The canary period should last long enough to see real traffic patterns. For most models, 24-48 hours covers enough variety in user behavior.

The DevOps Parallel

You already know this pattern.

Istio traffic splitting works the same way for APIs and ML models. KServe adds ML-specific features: model format support, GPU scheduling, and scale-to-zero for the canary when testing is done.
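For comparison, here is roughly what the hand-written microservice version of the same split looks like as an Istio VirtualService, built as a Python dict. The host and subset names are hypothetical, and the subsets would need a matching DestinationRule. With KServe you do not normally write this resource yourself; the sketch only shows the parallel.

```python
import json


def weighted_virtual_service(name: str, host: str, weights: dict) -> dict:
    """Build an Istio VirtualService that splits HTTP traffic across subsets by weight."""
    # This sketch insists on a total of 100 so the weights read as percentages.
    if sum(weights.values()) != 100:
        raise ValueError("route weights must sum to 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": name},
        "spec": {
            "hosts": [host],
            "http": [
                {
                    "route": [
                        {
                            "destination": {"host": host, "subset": subset},
                            "weight": weight,
                        }
                        for subset, weight in weights.items()
                    ]
                }
            ],
        },
    }


# Hypothetical service and subset names, for illustration only.
vs = weighted_virtual_service(
    name="payments-api",
    host="payments-api.default.svc.cluster.local",
    weights={"stable": 80, "canary": 20},
)
print(json.dumps(vs, indent=2))
```

The KServe version replaces this whole resource with the single canaryTrafficPercent field.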

Same infrastructure. Same Istio. Same observability stack. Applied to ML models.

This is Part 6 of the MLOps for DevOps Engineers series. Next: the two-container pattern for separating preprocessing from inference.
