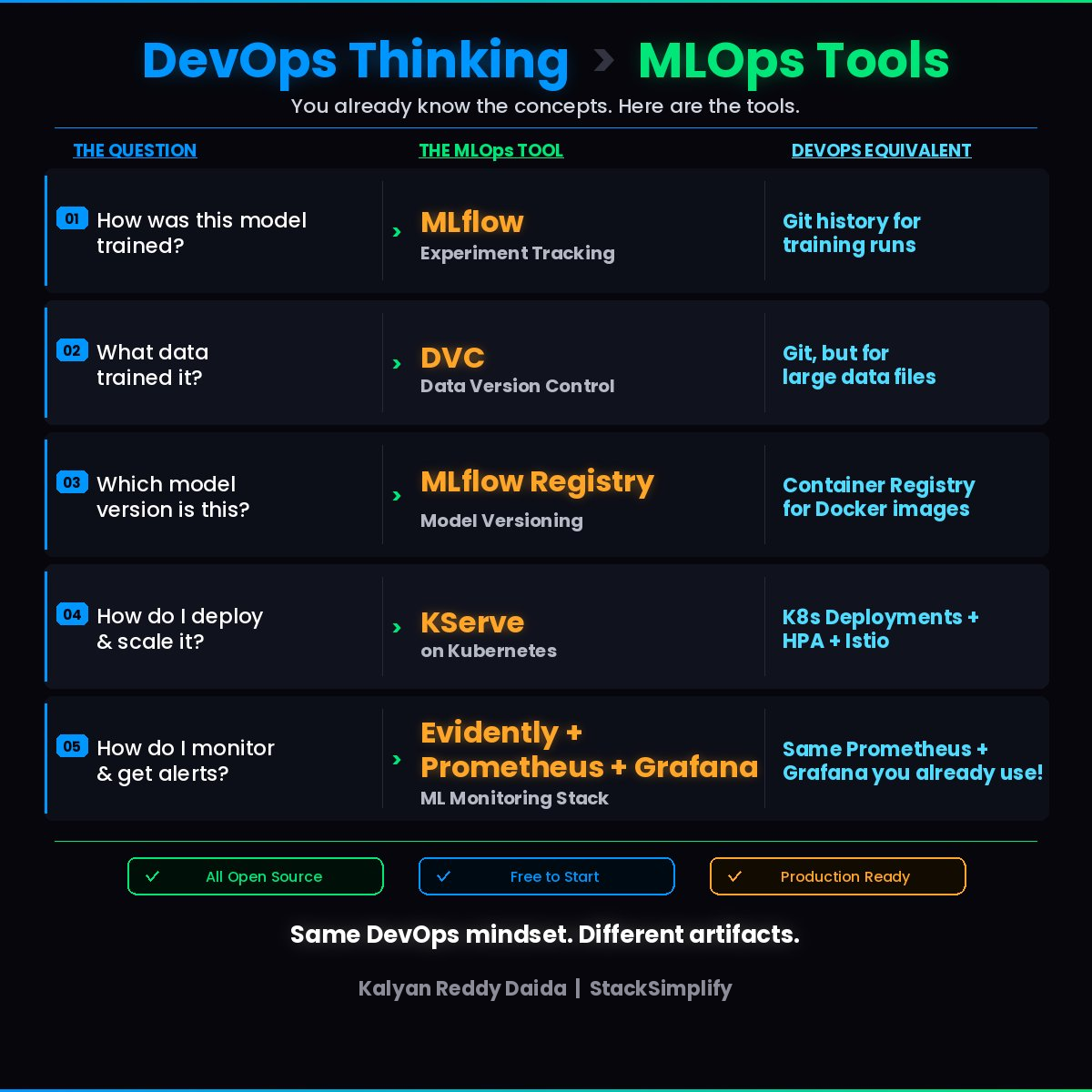

DevOps Thinking Applied to MLOps: 5 Essential Tools

You already know 80% of MLOps. Here are 5 open-source tools that map directly to your existing DevOps skills.

If you’re a DevOps engineer and a data scientist has ever handed you a model.pkl and said “deploy this”, you know the feeling.

Where did this come from? What data trained it? Which version is this? How do I scale it?

Here’s what I’ve learned after months of building MLOps pipelines: these aren’t new problems. We’ve already solved them in DevOps. The tools are different, but the thinking is the same.

The Mental Model: Same Problems, Different Artifacts

Every MLOps challenge maps directly to a DevOps pattern you already understand:

| DevOps Problem | MLOps Equivalent | Tool |
|---|---|---|
| Git history for builds | Experiment tracking for training runs | MLflow |
| Git for config files | Version control for large datasets | DVC |
| Container Registry for images | Model registry for model versions | MLflow Model Registry |
| K8s + HPA + Istio for APIs | Model serving with autoscaling and canary | KServe |
| Prometheus + Grafana for services | ML monitoring for data drift | Evidently + Prometheus + Grafana |

Let’s break each one down.

1. Experiment Tracking with MLflow

When you deploy an application, you have Git history showing every change. In ML, the equivalent is experiment tracking: recording every training run, every hyperparameter, every metric.

MLflow does this with a few logging calls, or automatically through autologging for supported libraries. Every time a data scientist trains a model, MLflow can record:

- The parameters used

- The dataset reference

- The accuracy metrics

- The model artifact itself

Instead of guessing which model_v3_final_FINAL.pkl is actually in production, you have a clean timeline of every experiment.

Think of it as `git log` for training runs. (See Part 2: MLflow deep dive for the full lifecycle.)
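To make the `git log` analogy concrete, here is a minimal sketch of what a tracking store keeps per run. It is a toy stand-in built on the standard library, not MLflow's API: the `Run` class, `log_run`, `best_run`, and the bucket path are all hypothetical names. In MLflow itself the equivalent recording happens through calls such as `mlflow.log_param` and `mlflow.log_metric` inside a run.

```python
import time
import uuid
from dataclasses import dataclass, field, asdict


@dataclass
class Run:
    """One training run: parameters, dataset reference, metrics, artifact."""
    params: dict
    dataset_ref: str
    metrics: dict
    artifact_path: str
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    started_at: float = field(default_factory=time.time)


def log_run(history: list, run: Run) -> None:
    """Append the run to an in-memory history (a real store would persist it)."""
    history.append(asdict(run))


def best_run(history: list, metric: str) -> dict:
    """Return the recorded run with the highest value for the given metric."""
    return max(history, key=lambda r: r["metrics"][metric])


history = []
# Hypothetical dataset location and artifact names, for illustration only.
log_run(history, Run({"max_depth": 3}, "s3://example-bucket/train-v1.csv",
                     {"accuracy": 0.91}, "model_a.pkl"))
log_run(history, Run({"max_depth": 5}, "s3://example-bucket/train-v1.csv",
                     {"accuracy": 0.94}, "model_b.pkl"))

winner = best_run(history, "accuracy")
print(winner["run_id"], winner["params"], winner["artifact_path"])
```

The point is the shape of the record: once every run carries its parameters, data reference, metrics, and artifact together, "which model is this?" becomes a lookup instead of an investigation.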

2. Data Version Control with DVC

Code changes live in Git. But training data is usually too large for Git.

DVC solves this by tracking large files (datasets, model binaries) with Git-like commands while storing the actual data in S3, GCS, or Azure Blob.

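Your workflow stays familiar: `dvc add` starts tracking a file, `git commit` records the small `.dvc` metadata file it produces, and `dvc push` uploads the data itself to remote storage.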

The data gets versioned. The metadata lives in Git. No more “which CSV was used to train the model in production?”
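The split between "data in storage" and "metadata in Git" can be sketched in a few lines. This is a simplified illustration of the content-addressing idea, assuming a local cache directory in place of S3 and a JSON pointer file in place of DVC's real `.dvc` format; `track` and `checkout` are hypothetical helper names, not DVC commands.

```python
import hashlib
import json
import shutil
import tempfile
from pathlib import Path


def track(data_file: Path, cache_dir: Path) -> Path:
    """Copy the data into a hash-keyed cache and write a small pointer file."""
    digest = hashlib.md5(data_file.read_bytes()).hexdigest()
    cache_dir.mkdir(parents=True, exist_ok=True)
    shutil.copy2(data_file, cache_dir / digest)
    pointer = data_file.parent / (data_file.name + ".pointer.json")
    pointer.write_text(json.dumps({"path": data_file.name, "md5": digest}))
    return pointer


def checkout(pointer: Path, cache_dir: Path) -> None:
    """Restore the data file that the pointer refers to."""
    meta = json.loads(pointer.read_text())
    shutil.copy2(cache_dir / meta["md5"], pointer.parent / meta["path"])


workdir = Path(tempfile.mkdtemp())
cache = workdir / "cache"          # stands in for remote storage
data = workdir / "train.csv"

data.write_text("id,label\n1,0\n")
pointer = track(data, cache)
pointer_v1 = pointer.read_text()   # in practice: the version committed to Git

data.write_text("id,label\n1,0\n2,1\n")
track(data, cache)                 # second dataset version, new hash

pointer.write_text(pointer_v1)     # like checking out an older commit
checkout(pointer, cache)
restored = data.read_text()
print(restored)
```

Only the tiny pointer file needs to live in Git. Checking out an old commit brings back the old pointer, and the pointer is enough to fetch the exact bytes that trained the model.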

3. Model Registry with MLflow

Container registries store Docker images with tags. MLflow Model Registry does the same for ML models:

- Store trained model artifacts

- Version every model (v1, v2, v3…)

- Tag as `staging` or `production`

- Track who promoted what and when

When a model is ready, you promote it from staging to production in the registry. Your deployment pipeline picks it up from there, just like pulling a Docker image by tag.
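The promotion flow behaves a lot like moving a mutable image tag. Here is a minimal sketch of that behavior, assuming an in-memory registry; `ToyRegistry` and its methods are hypothetical and are not the MLflow client API, and the storage URIs are made up. Recent MLflow releases express the same idea with model aliases rather than fixed stages, so treat the tag names as illustrative.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToyRegistry:
    versions: dict = field(default_factory=dict)  # version number -> artifact URI
    tags: dict = field(default_factory=dict)      # tag name -> version number
    audit: list = field(default_factory=list)     # (who, tag, version, when)

    def register(self, artifact_uri: str) -> int:
        """Store a new model version and return its number."""
        version = len(self.versions) + 1
        self.versions[version] = artifact_uri
        return version

    def promote(self, version: int, tag: str, who: str) -> None:
        """Point a tag at a version and record who did it and when."""
        if version not in self.versions:
            raise KeyError(f"unknown version {version}")
        self.tags[tag] = version
        self.audit.append((who, tag, version, datetime.now(timezone.utc)))

    def resolve(self, tag: str) -> str:
        """What a deployment pipeline would call to find the artifact to ship."""
        return self.versions[self.tags[tag]]


registry = ToyRegistry()
v1 = registry.register("s3://example-bucket/models/churn/1")
v2 = registry.register("s3://example-bucket/models/churn/2")

registry.promote(v1, "production", who="alice")
registry.promote(v2, "staging", who="bob")
registry.promote(v2, "production", who="alice")  # v2 replaces v1 in production

print(registry.resolve("production"))
```

The deployment pipeline only ever asks for `production`. Which version that resolves to is a registry decision with an audit trail, not something hard-coded in the pipeline.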

4. Model Serving with KServe on Kubernetes

This is where it gets really familiar.

KServe runs on Kubernetes and serves ML models as HTTP endpoints with:

- Autoscaling (including scale-to-zero for cost savings)

- Canary rollouts and traffic splitting

- GPU scheduling for heavy models

- Support for TensorFlow, PyTorch, sklearn and more

If you’ve set up K8s Deployments with HPA and Istio for microservices, you already understand the architecture. KServe just adds ML-specific features on top.
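For a feel of how little ML-specific configuration is involved, here is a sketch that builds a KServe `InferenceService` manifest as a Python dictionary. The field names follow the `serving.kserve.io/v1beta1` resource as I understand it; the service name, storage URI, and the `inference_service` helper are assumptions for illustration. Scale-to-zero via `minReplicas: 0` also depends on KServe running in its serverless (Knative) mode, so check that against your cluster setup.

```python
import json


def inference_service(name, storage_uri, canary_percent=None, min_replicas=0):
    """Build an InferenceService manifest for a scikit-learn model."""
    predictor = {
        "minReplicas": min_replicas,  # 0 allows scale-to-zero in serverless mode
        "model": {
            "modelFormat": {"name": "sklearn"},
            "storageUri": storage_uri,
        },
    }
    if canary_percent is not None:
        # Share of traffic sent to the newest revision during a canary rollout.
        predictor["canaryTrafficPercent"] = canary_percent
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {"predictor": predictor},
    }


# Hypothetical model name and bucket path.
manifest = inference_service(
    "churn-model",
    "s3://example-bucket/models/churn/2",
    canary_percent=10,
)
print(json.dumps(manifest, indent=2))
```

Since `kubectl` accepts JSON as well as YAML, the printed output can be piped to `kubectl apply -f -`. Compared with a hand-written Deployment, Service, HPA, and VirtualService, most of the wiring is implied by the resource type.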

5. ML Monitoring with Evidently + Prometheus + Grafana

Your existing Prometheus + Grafana stack monitors service health: latency, error rates, CPU.

For ML, you need one more layer: model health.

- Is the input data shifting?

- Is prediction accuracy degrading?

- Are certain categories suddenly getting wrong predictions?

Evidently calculates ML-specific metrics like data drift and prediction quality. Once those values are exported as Prometheus metrics, your existing Grafana dashboards and alerting rules work the same way they do for any other service.

You’re just tracking model health instead of service health.
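To show what a "model health" metric looks like by the time it reaches Prometheus, here is a small sketch that scores drift for one feature and renders it in the Prometheus text exposition format. It uses the Population Stability Index as a stand-in drift score and plain Python rather than Evidently's API; the metric name, the `age` feature, and both helper functions are hypothetical.

```python
import math


def psi(reference, current, bins=5):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid a zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each share so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def to_prometheus(name, value, labels):
    """Render one gauge sample in the Prometheus text exposition format."""
    label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
    return f"# TYPE {name} gauge\n{name}{{{label_str}}} {value:.4f}\n"


reference = [i / 100 for i in range(100)]       # training-time distribution
shifted = [0.5 + i / 200 for i in range(100)]   # production data, shifted upward

score = psi(reference, shifted)
exposition = to_prometheus("feature_drift_psi", score, {"feature": "age"})
print(exposition)
```

From here it is ordinary Prometheus work: scrape the value, graph it, and alert when it crosses a threshold. A PSI above roughly 0.2 is a common rule of thumb for meaningful drift, though the right threshold depends on the feature.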

The 80/20 Rule of MLOps

Here’s the thing most people don’t tell you:

MLOps is 80% infrastructure and 20% understanding what the data scientist needs.

That 80%? You already own it. Kubernetes, CI/CD, monitoring, version control, infrastructure as code. The tools change, but the patterns are the same.

If you’re a DevOps engineer wondering whether MLOps is “too much ML” for you, it’s not.

Same DevOps mindset. Different artifacts.

All Tools Mentioned (Open Source, Free to Start)

| Tool | What It Does | Link |
|---|---|---|
| MLflow | Experiment tracking + Model registry | mlflow.org |
| DVC | Data version control | dvc.org |
| KServe | Model serving on Kubernetes | kserve.github.io |
| Evidently | ML monitoring & data drift | evidentlyai.com |
| Prometheus + Grafana | Metrics & dashboards | prometheus.io |

No vendor lock-in. No credit card required.
