ML Cost Optimization: One YAML Field Cut Our Bill by 80%

We changed minReplicas from 1 to 0. Infrastructure cost dropped 80%. Here is how KPA, scale-to-zero, and panic mode work for ML inference.

We changed one YAML field from 1 to 0. Infrastructure cost dropped 80%.

The field: minReplicas.

When set to 1, your ML inference pod runs 24/7. Even at 3 AM when nobody is making predictions. That’s $50-150 per month per model, running idle.

When set to 0, the pod scales to zero when idle. Traffic arrives, the pod spins up. Traffic stops, the pod disappears. You pay only for what you use.

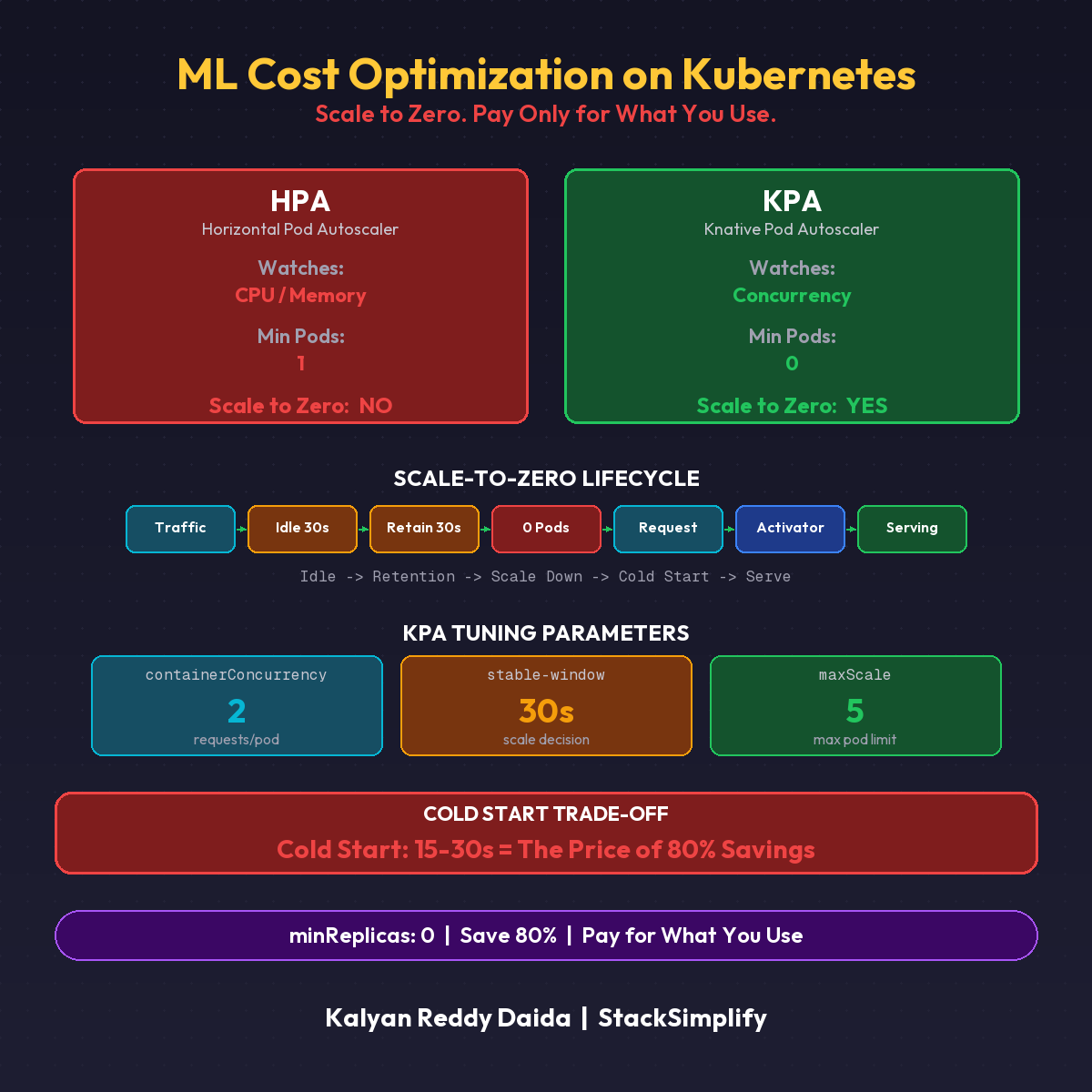
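The arithmetic behind the headline number can be sketched in a few lines. This is a rough model, assuming the bill is proportional to how long the pod is up (which in practice also requires the underlying nodes to scale down) and assuming a hypothetical 20% uptime; the helper names are illustrative, not from any library.

```python
def monthly_cost(always_on_cost: float, active_fraction: float) -> float:
    """Monthly cost of a pod that is billed only while it is running.

    always_on_cost: monthly bill with minReplicas=1 (pod up 24/7).
    active_fraction: share of the month the pod is actually up (0.0 to 1.0).
    """
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return always_on_cost * active_fraction


def savings_percent(active_fraction: float) -> float:
    """Savings relative to running 24/7, under the same proportional-cost assumption."""
    return (1.0 - active_fraction) * 100.0


# The $50-150 range comes from the article; 20% uptime is an assumed figure.
for always_on in (50.0, 150.0):
    scaled = monthly_cost(always_on, active_fraction=0.2)
    print(f"${always_on:.0f}/month always-on -> ${scaled:.0f}/month at 20% uptime")
```

Read the other way around, an 80% saving under this model means the pod was only needed about a fifth of the time.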

KPA vs HPA

| Autoscaler | Watches | Scales to Zero? |
|---|---|---|
| Kubernetes HPA | CPU and memory | No. Minimum 1 pod by default |
| Knative KPA | Concurrency (requests per pod) | Yes. Zero pods when idle |

Same cluster. Same pods. Different autoscaler. Very different bill.
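To see why the two autoscalers land on different pod counts at 3 AM, here is a simplified sketch of each rule. The HPA function follows the documented formula `ceil(currentReplicas * currentMetric / targetMetric)`; the KPA function is a deliberately reduced version that ignores Knative's target utilization percentage and windowed averaging. Function names and sample numbers are illustrative.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_cpu: float,
                         target_cpu: float, min_replicas: int = 1) -> int:
    """HPA rule: scale in proportion to metric/target, never below min_replicas."""
    desired = math.ceil(current_replicas * current_cpu / target_cpu)
    return max(desired, min_replicas)


def kpa_desired_pods(in_flight_requests: float, target_per_pod: float,
                     min_pods: int = 0) -> int:
    """Simplified KPA rule: enough pods that each one handles about
    target_per_pod concurrent requests."""
    desired = math.ceil(in_flight_requests / target_per_pod)
    return max(desired, min_pods)


# Idle at 3 AM: no CPU load, no requests in flight.
print(hpa_desired_replicas(1, current_cpu=0.0, target_cpu=50.0))  # 1
print(kpa_desired_pods(0, target_per_pod=2))                      # 0

# Seven concurrent requests with a target of 2 per pod.
print(kpa_desired_pods(7, target_per_pod=2))                      # 4
```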

How KPA Tunes

Three parameters that matter most:

| Parameter | What It Does | Our Setting |
|---|---|---|
| scaleTarget | Requests per pod before scaling out | 2 (default 10) |
| minReplicas | Minimum pods | 0 (scale-to-zero) |
| window | Observation period | 30s |
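For illustration, the settings in the table could look like this on a KServe `InferenceService`, which is where the field names `minReplicas` and `scaleTarget` appear. That the series deploys through KServe is an assumption here, as is passing the window through the `autoscaling.knative.dev/window` annotation; the service name, model format, and storage URI are hypothetical placeholders. The manifest is built as a Python dict and printed as JSON.

```python
import json

inference_service = {
    "apiVersion": "serving.kserve.io/v1beta1",
    "kind": "InferenceService",
    "metadata": {
        "name": "demo-model",  # hypothetical name
        "annotations": {
            # Observation window for the autoscaler (assumed to be set via annotation).
            "autoscaling.knative.dev/window": "30s",
        },
    },
    "spec": {
        "predictor": {
            "minReplicas": 0,              # scale-to-zero when idle
            "scaleMetric": "concurrency",  # scale on in-flight requests per pod
            "scaleTarget": 2,              # requests per pod before scaling out
            "model": {
                "modelFormat": {"name": "sklearn"},              # hypothetical
                "storageUri": "gs://example-bucket/demo-model",  # hypothetical
            },
        }
    },
}

manifest = json.dumps(inference_service, indent=2)
print(manifest)
```

`kubectl apply -f` accepts JSON as well as YAML, so the printed output can be saved to a file and applied as-is once the placeholders are replaced.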

Panic Mode

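Traffic spikes to 2x what the current pods can handle within 6 seconds? KPA switches to panic mode and scales up on that short panic window, without waiting for the full observation window. (Six seconds is 10% of Knative's default 60-second window; a shorter window shrinks the panic window with it.) New pods are requested right away, though each one still has to start and load its model.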

Once traffic stabilizes, KPA switches back to stable mode.
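The stable/panic decision can be sketched as a single function. This is a simplified model, not Knative's implementation: it takes the two windowed averages as inputs instead of computing them, and it leaves out the rule that panic mode only ends after a full stable window without a spike. The 2.0 threshold matches the "2x" above; the names are illustrative.

```python
import math

PANIC_THRESHOLD = 2.0  # enter panic when short-window demand needs >= 2x the ready pods


def kpa_decide(stable_concurrency: float, panic_concurrency: float,
               ready_pods: int, target_per_pod: float) -> tuple[str, int]:
    """Return (mode, desired_pods) for one autoscaler tick.

    stable_concurrency: average in-flight requests over the long window.
    panic_concurrency: average in-flight requests over the short panic window.
    """
    ready = max(1, ready_pods)  # avoid dividing by zero when scaled to zero
    desired_stable = math.ceil(stable_concurrency / target_per_pod)
    desired_panic = math.ceil(panic_concurrency / target_per_pod)

    if desired_panic / ready >= PANIC_THRESHOLD:
        # In panic mode, scale on the short window and never scale down.
        return "panic", max(desired_panic, ready_pods)
    return "stable", desired_stable


# Steady traffic: both windows agree, no panic.
print(kpa_decide(4, 4, ready_pods=2, target_per_pod=2))   # ('stable', 2)

# Sudden spike: the long average lags, the short window triggers panic.
print(kpa_decide(5, 12, ready_pods=2, target_per_pod=2))  # ('panic', 6)
```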

The Trade-off: Cold Start

| Scenario | First Request Latency |
|---|---|
| Pod already running | Instant (milliseconds) |
| Scale-from-zero | 15-30 seconds (model loading) |

When Scale-to-Zero Is Wrong

| Use Case | minReplicas |
|---|---|
| Real-time fraud detection | 1 (never scale to zero, cold start = unblocked fraud) |
| Internal batch scoring | 0 (save 23 hours of compute) |
| Dev/staging | 0 (nobody watching at midnight) |
| Low-traffic models | 0 (best cost-performance ratio) |

Match the scaling strategy to the business requirement. (See also Part 8: Scale-to-Zero fundamentals.)
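One way to turn the table into a rule of thumb: keep a warm pod only when a cold start would exceed the latency the workload can tolerate. The sketch below assumes a 30-second cold start (the upper end of the range above); the latency budgets are hypothetical numbers chosen for illustration, and `choose_min_replicas` is not part of any library.

```python
def choose_min_replicas(latency_budget_s: float, cold_start_s: float = 30.0) -> int:
    """Return 1 if a cold start would exceed the latency budget, else 0."""
    return 1 if cold_start_s > latency_budget_s else 0


# Hypothetical latency budgets, in seconds, for the first request after idle.
workloads = {
    "real-time fraud detection": 0.2,
    "internal batch scoring": 3600.0,
    "dev/staging": 120.0,
}

for name, budget in workloads.items():
    print(f"{name}: minReplicas={choose_min_replicas(budget)}")
```

Cost and traffic shape matter too, so treat this as a starting point for the conversation with the business owner, not a complete policy.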

This is Part 18 of the MLOps for DevOps Engineers series.