ML Model Monitoring: Your Grafana Dashboard Is Lying to You

Your model shows 10% CPU, zero errors, healthy pod status. And still returns garbage predictions. Here are the 3 alerts you need today.

Your ML model was 95% accurate when you deployed it. That was 6 months ago. Nobody has checked since.

A model can show 10% CPU, zero errors, healthy pod status. And still return garbage predictions. Your Grafana dashboard shows all green. Your customers see wrong results.

Why This Happens

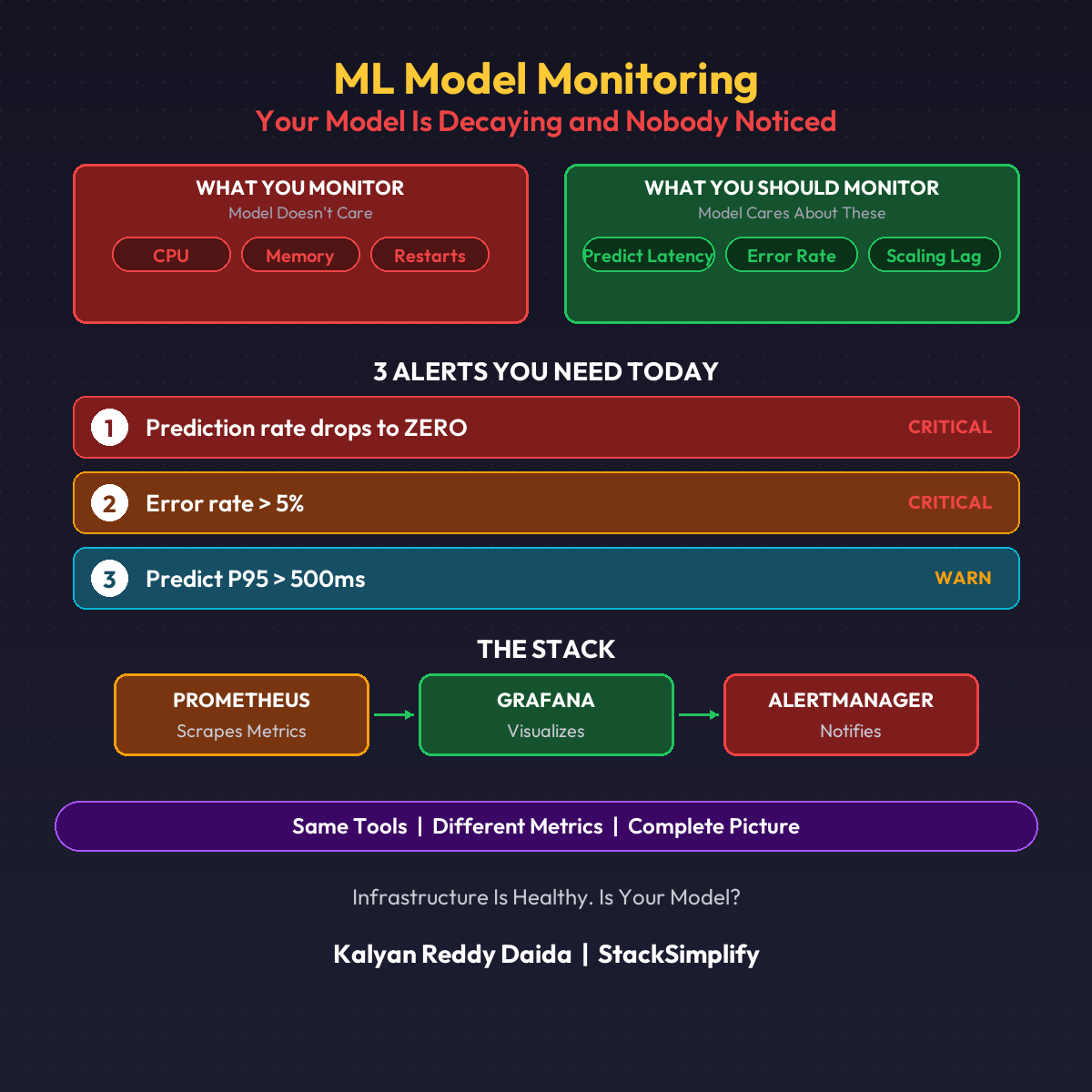

Your monitoring tracks CPU, memory, and pod restarts. Your model cares about none of that.

Models degrade because the world changes:

- Customer behavior shifts (seasonal, economic)

- New data patterns the model never saw

- Input distributions drift from training data

- Feature relationships change over time

Infrastructure monitoring catches container failures. It completely misses model failures.

The 3 Alerts You Need Today

| Alert | Condition | Severity |
|---|---|---|
| Prediction rate drops to ZERO | No predictions in 5 minutes | CRITICAL |
| Error rate > 5% | More than 1 in 20 requests failing | CRITICAL |
| Predict P95 > 500ms | Inference slowing down | WARNING |

These three alone would catch most operational incidents in ML serving: a model that stops predicting, starts failing, or slows down.
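To make the three conditions concrete, here is a minimal Python sketch that evaluates them over an in-memory list of request records. It is an illustration of the logic only, not how AlertManager evaluates rules. The `Request` record, the `evaluate_alerts` function, and the choice to measure latency on successful predictions only are assumptions made for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical record of one inference request."""
    timestamp: float  # seconds since epoch
    latency_s: float  # inference latency in seconds
    ok: bool          # False if the request failed


def p95(values):
    """Nearest-rank 95th percentile; returns None for an empty list."""
    if not values:
        return None
    ordered = sorted(values)
    rank = max(1, math.ceil(95 * len(ordered) / 100))  # 1-based rank
    return ordered[rank - 1]


def evaluate_alerts(requests, now, window_s=300):
    """Return (severity, message) pairs for the alerts that fire in the window."""
    recent = [r for r in requests if now - window_s <= r.timestamp <= now]
    successes = [r for r in recent if r.ok]
    alerts = []

    # Alert 1: no successful predictions in the window (5 minutes by default).
    if not successes:
        alerts.append(("CRITICAL", f"no predictions in the last {window_s} s"))

    # Alert 2: more than 1 in 20 requests failing.
    if recent:
        error_rate = (len(recent) - len(successes)) / len(recent)
        if error_rate > 0.05:
            alerts.append(("CRITICAL", f"error rate {error_rate:.1%}"))

    # Alert 3: P95 latency above 500 ms (assumption: successful requests only).
    latency_p95 = p95([r.latency_s for r in successes])
    if latency_p95 is not None and latency_p95 > 0.5:
        alerts.append(("WARNING", f"P95 latency {latency_p95 * 1000:.0f} ms"))

    return alerts
```

Calling `evaluate_alerts(records, now=time.time())` on a rolling buffer of recent requests returns an empty list when all three conditions are healthy.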

The DevOps Parallel

For applications: Prometheus scrapes metrics. Grafana visualizes. AlertManager notifies.

For ML models: Same Prometheus. Same Grafana. Same AlertManager. Different metrics: predict latency, error rate, scaling lag.

The stack doesn’t change. The metrics do.
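As a rough sketch of what "different metrics" means in practice, the snippet below wraps a predict call and renders request, error, and latency metrics in the Prometheus text exposition format. It uses only the standard library to stay self-contained; in a real service a Prometheus client library would normally do this work. The metric names, the bucket bounds, and the `ModelMetrics` class are hypothetical choices for illustration.

```python
import time

# Hypothetical histogram bucket upper bounds, in seconds.
BUCKETS = (0.05, 0.1, 0.25, 0.5, 1.0)


class ModelMetrics:
    """Collects the counters and latency histogram the alerts rely on."""

    def __init__(self):
        self.requests = 0
        self.errors = 0
        self.latency_sum = 0.0
        # Cumulative counts: each bucket counts observations <= its bound.
        self.bucket_counts = [0] * len(BUCKETS)

    def observe(self, latency_s, ok=True):
        self.requests += 1
        if not ok:
            self.errors += 1
        self.latency_sum += latency_s
        for i, bound in enumerate(BUCKETS):
            if latency_s <= bound:
                self.bucket_counts[i] += 1

    def timed_predict(self, predict_fn, features):
        """Call predict_fn(features), recording latency and success or failure."""
        start = time.perf_counter()
        try:
            result = predict_fn(features)
        except Exception:
            self.observe(time.perf_counter() - start, ok=False)
            raise
        self.observe(time.perf_counter() - start, ok=True)
        return result

    def render(self):
        """Render the metrics in Prometheus text exposition format."""
        lines = [
            "# TYPE predict_requests_total counter",
            f"predict_requests_total {self.requests}",
            "# TYPE predict_errors_total counter",
            f"predict_errors_total {self.errors}",
            "# TYPE predict_latency_seconds histogram",
        ]
        for bound, count in zip(BUCKETS, self.bucket_counts):
            lines.append(f'predict_latency_seconds_bucket{{le="{bound}"}} {count}')
        lines.append(f'predict_latency_seconds_bucket{{le="+Inf"}} {self.requests}')
        lines.append(f"predict_latency_seconds_sum {self.latency_sum}")
        lines.append(f"predict_latency_seconds_count {self.requests}")
        return "\n".join(lines) + "\n"
```

Assuming Prometheus scrapes metrics with these names, the P95 latency condition could be written in PromQL as `histogram_quantile(0.95, sum by (le) (rate(predict_latency_seconds_bucket[5m]))) > 0.5`.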

What This Doesn’t Cover

These are operational metrics (is the model running?). Statistical monitoring (is the model still accurate?) is a different layer: prediction distribution shifts, feature drift, accuracy decay.

Step 1 is operational monitoring (this post). Step 2 is statistical monitoring (next post).

Most teams don’t even have Step 1.

This is Part 10 of the MLOps for DevOps Engineers series.