ML Security on Kubernetes: 4 Layers Protecting Your Models

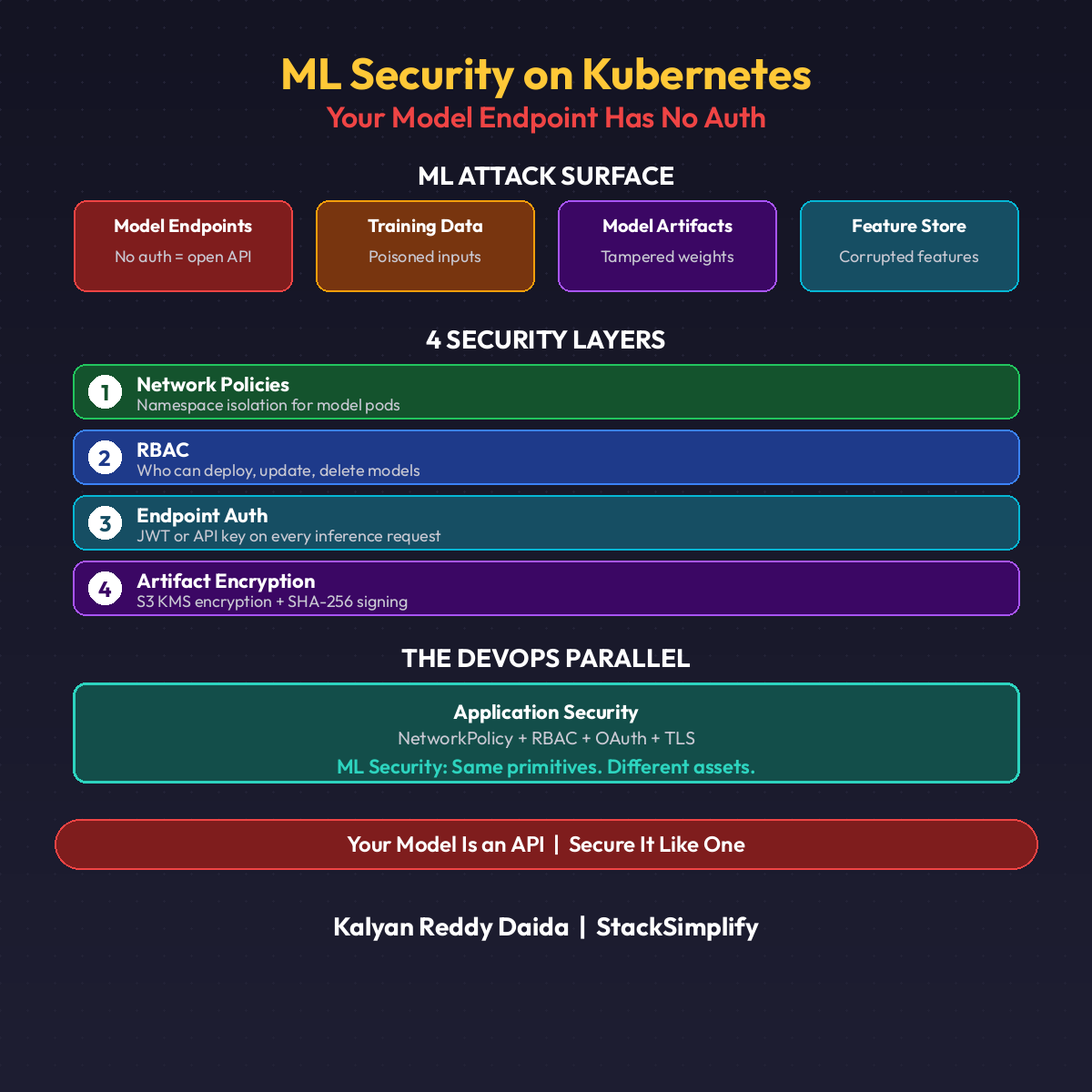

Your model endpoint has no auth. Anyone with the URL gets predictions.

That is not a hypothetical. It is the default on most KServe deployments. Deploy a model, get an endpoint, and it is wide open. No token. No identity check. No network restriction.

ML systems have a unique attack surface: training data, model artifacts, feature stores, and inference endpoints. Each one is a target.

The ML Attack Surface

| Asset | Default Risk |
|---|---|
| Model endpoints | Open, returning predictions to anyone |
| Training data | S3 buckets with broad IAM access |
| Model artifacts | Serialized files that can be swapped or poisoned |
| Feature stores | Real-time pipelines with PII and business logic |

Traditional DevOps secures code. ML also has to secure data and models.

Layer 1: NetworkPolicy

Lock down namespace communication. Your model serving namespace should not accept traffic from every pod in the cluster.

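
A minimal sketch of such a policy, assuming a hypothetical serving namespace `ml-serving` and an ingress gateway running in `istio-system` (both names are illustrative, not taken from a specific deployment). It allows ingress to every pod in the namespace only from the gateway's namespace:

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-gateway-only        # hypothetical name
  namespace: ml-serving           # hypothetical serving namespace
spec:
  podSelector: {}                 # empty selector: applies to all pods in the namespace
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              # label Kubernetes sets automatically on every namespace
              kubernetes.io/metadata.name: istio-system   # assumed gateway namespace
```

NetworkPolicy is only enforced when the cluster's CNI plugin supports it, and any other namespace that legitimately needs to reach the pods would need its own `from` entry.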

One YAML. Blast radius shrinks immediately. Without this, any compromised pod in any namespace can bypass the gateway and call your model directly.

Layer 2: RBAC for Model Serving

A dedicated ServiceAccount for inference pods. Roles scoped to exactly what the model needs. No cluster-wide permissions.

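
As a rough sketch of that setup, assuming a hypothetical namespace `ml-serving`, a ServiceAccount named `inference-sa`, and a single credentials secret named `model-store-credentials` (all illustrative names):

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: inference-sa              # hypothetical
  namespace: ml-serving           # hypothetical
---
apiVersion: rbac.authorization.k8s.io/v1
kind: Role                        # namespace-scoped, not a ClusterRole
metadata:
  name: inference-reader          # hypothetical
  namespace: ml-serving
rules:
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["model-store-credentials"]   # one secret, by name
    verbs: ["get"]                # read-only
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: inference-reader-binding  # hypothetical
  namespace: ml-serving
subjects:
  - kind: ServiceAccount
    name: inference-sa
    namespace: ml-serving
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: inference-reader
```

The inference pods would then reference the account through `serviceAccountName: inference-sa` in their pod spec.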

Key decisions:

- Namespace-scoped Role, not ClusterRole

- Secret access limited to one specific secret by name

- No `create`, `update`, or `delete` verbs

Your inference pod reads models and its own credentials. Nothing more.

Layer 3: Auth on the Inference Endpoint

This is the layer most teams skip entirely. They configure network policies and RBAC, then leave the actual prediction endpoint wide open.

Istio AuthorizationPolicy + JWT validation:

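
One way this could look, as a sketch only: the namespace `ml-serving`, the pod label `app: fraud-model`, and the issuer `https://idp.example.com` are all assumptions for illustration. The policy allows a request only if it carries a validated JWT from that issuer:

```yaml
apiVersion: security.istio.io/v1beta1
kind: AuthorizationPolicy
metadata:
  name: require-jwt               # hypothetical
  namespace: ml-serving           # hypothetical
spec:
  selector:
    matchLabels:
      app: fraud-model            # hypothetical label on the inference pods
  action: ALLOW
  rules:
    - from:
        - source:
            # request principal has the form <issuer>/<subject>;
            # the wildcard accepts any subject from the assumed issuer
            requestPrincipals: ["https://idp.example.com/*"]
```

Because this is an ALLOW policy with a single rule, requests that do not match it are denied. Where the policy should attach (the workload or the ingress gateway) depends on how the mesh and KServe are set up.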

Pair with RequestAuthentication pointing at your identity provider’s JWKS URI. No token, no prediction. Expired tokens rejected. Missing tokens rejected.
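
A minimal sketch of that RequestAuthentication, assuming the same hypothetical namespace and pod label and an illustrative identity provider at `https://idp.example.com`:

```yaml
apiVersion: security.istio.io/v1beta1
kind: RequestAuthentication
metadata:
  name: inference-jwt             # hypothetical
  namespace: ml-serving           # hypothetical
spec:
  selector:
    matchLabels:
      app: fraud-model            # hypothetical label on the inference pods
  jwtRules:
    - issuer: "https://idp.example.com"                        # assumed issuer
      jwksUri: "https://idp.example.com/.well-known/jwks.json" # assumed JWKS location
```

On its own, a RequestAuthentication rejects invalid or expired tokens but still lets requests with no token through. It is the AuthorizationPolicy requiring a request principal that turns a missing token into a denial, which is why the two are paired.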

Most ML incidents traced to “how did they get to our endpoint?” end here. Fix Layer 3 first if you fix nothing else.

Layer 4: Model Artifact Encryption and Signing

If someone can write to your model bucket, they can replace your model with a poisoned version. You will not get errors. You will get wrong predictions, served confidently.

Encrypted storage via S3 bucket policy:

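
A sketch of one such policy, written here as a CloudFormation fragment so it stays in YAML (CloudFormation is an assumption, as is the bucket name `example-model-artifacts`; the same statement can be attached as a plain JSON bucket policy). It denies any upload that does not request SSE-KMS encryption:

```yaml
Resources:
  ModelBucketPolicy:
    Type: AWS::S3::BucketPolicy
    Properties:
      Bucket: example-model-artifacts        # hypothetical bucket name
      PolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Sid: DenyUnencryptedUploads
            Effect: Deny
            Principal: "*"
            Action: "s3:PutObject"
            Resource: "arn:aws:s3:::example-model-artifacts/*"
            Condition:
              StringNotEquals:
                # reject uploads that do not ask for KMS server-side encryption
                "s3:x-amz-server-side-encryption": "aws:kms"
```

This covers encryption only. Who may write to the bucket at all is still a matter for IAM and the rest of the bucket policy.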

Integrity-checked artifacts via a SHA-256 digest or a Sigstore cosign signature:

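
For the SHA-256 variant, a sketch of "verify before serving" is an init container that checks the model file against a digest recorded at training time and blocks the server from starting on a mismatch. Everything named here is hypothetical: the pod, the images, the volume claim, the file path `/models/model.bin`, and the placeholder digest. It also assumes the model file is already present on the mounted volume.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: model-server                       # hypothetical
  namespace: ml-serving                    # hypothetical
spec:
  initContainers:
    - name: verify-model
      image: busybox:1.36
      command: ["sh", "-c"]
      args:
        # sha256sum -c reads "<digest>  <path>" from stdin and exits non-zero on mismatch
        - echo "${EXPECTED_SHA256}  /models/model.bin" | sha256sum -c
      env:
        - name: EXPECTED_SHA256
          value: "REPLACE_WITH_RECORDED_DIGEST"   # placeholder, not a real digest
      volumeMounts:
        - name: model-store
          mountPath: /models
          readOnly: true
  containers:
    - name: server
      image: registry.example.com/model-server:1.0   # hypothetical image
      volumeMounts:
        - name: model-store
          mountPath: /models
          readOnly: true
  volumes:
    - name: model-store
      persistentVolumeClaim:
        claimName: model-store             # hypothetical claim
```

If the init container fails, the main container never starts. A digest only helps if it is stored somewhere an attacker with bucket write access cannot also change. A cosign signature removes that dependency by tying verification to a signing key or identity instead.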

Encrypt at rest. Verify before serving. Two steps that catch silent attacks.

The DevOps Parallel

You already do all of this for application services. ML uses the exact same Kubernetes primitives.

| DevOps | ML |
|---|---|
| NetworkPolicy for microservice isolation | NetworkPolicy for inference namespace |
| RBAC for pod permissions | RBAC for model-serving ServiceAccount |
| Auth middleware on API endpoints | Istio AuthZ on inference endpoints |
| Encrypted secrets in Vault | KMS-encrypted model artifacts |

Same primitives. Different assets.

Three ML-Specific Threats That Do Not Exist in DevOps

Threat 1: Model Extraction

Attacker queries your endpoint thousands of times and reconstructs your model from responses. They never touch S3.

- Mitigation: rate limiting, return labels (not raw probabilities), Layer 3 auth, monitor query patterns.

Threat 2: Adversarial Inputs

Crafted inputs that look normal to humans but fool the model. Transaction data modified to bypass fraud detection.

- Mitigation: input validation, out-of-distribution monitoring, ensemble models, confidence thresholds that reject low-score predictions.

Threat 3: Data Poisoning

Attacker injects bad data into the training pipeline. Model learns wrong patterns. Training completes successfully.

- Mitigation: data validation gates before training, DVC for data versioning, anomaly detection on training data, compare model behavior before/after retraining.

Start With Layer 3

You do not need all four layers on day one. Start with Layer 3 (endpoint auth). It is the most impactful and the most commonly skipped.

Then Layer 1 (NetworkPolicy). Then Layer 2 (RBAC). Layer 4 matters most once your model artifacts hold real business value.

Defense in depth. (See Part 16: ML Governance for the promotion gate pattern that pairs with this security stack.)

Quick Reference

| Layer | Primitive |
|---|---|
| 1. Network | Kubernetes NetworkPolicy |
| 2. RBAC | ServiceAccount + Role + RoleBinding |
| 3. Endpoint Auth | Istio AuthorizationPolicy + RequestAuthentication |
| 4. Artifact | S3 SSE-KMS + SHA-256 or cosign |

This is Part 22 of the MLOps for DevOps Engineers series. Hands-on Kubernetes security patterns are covered in the courses at stacksimplify.com.