Multi-Model Serving on Kubernetes: 50 Models, One Cluster

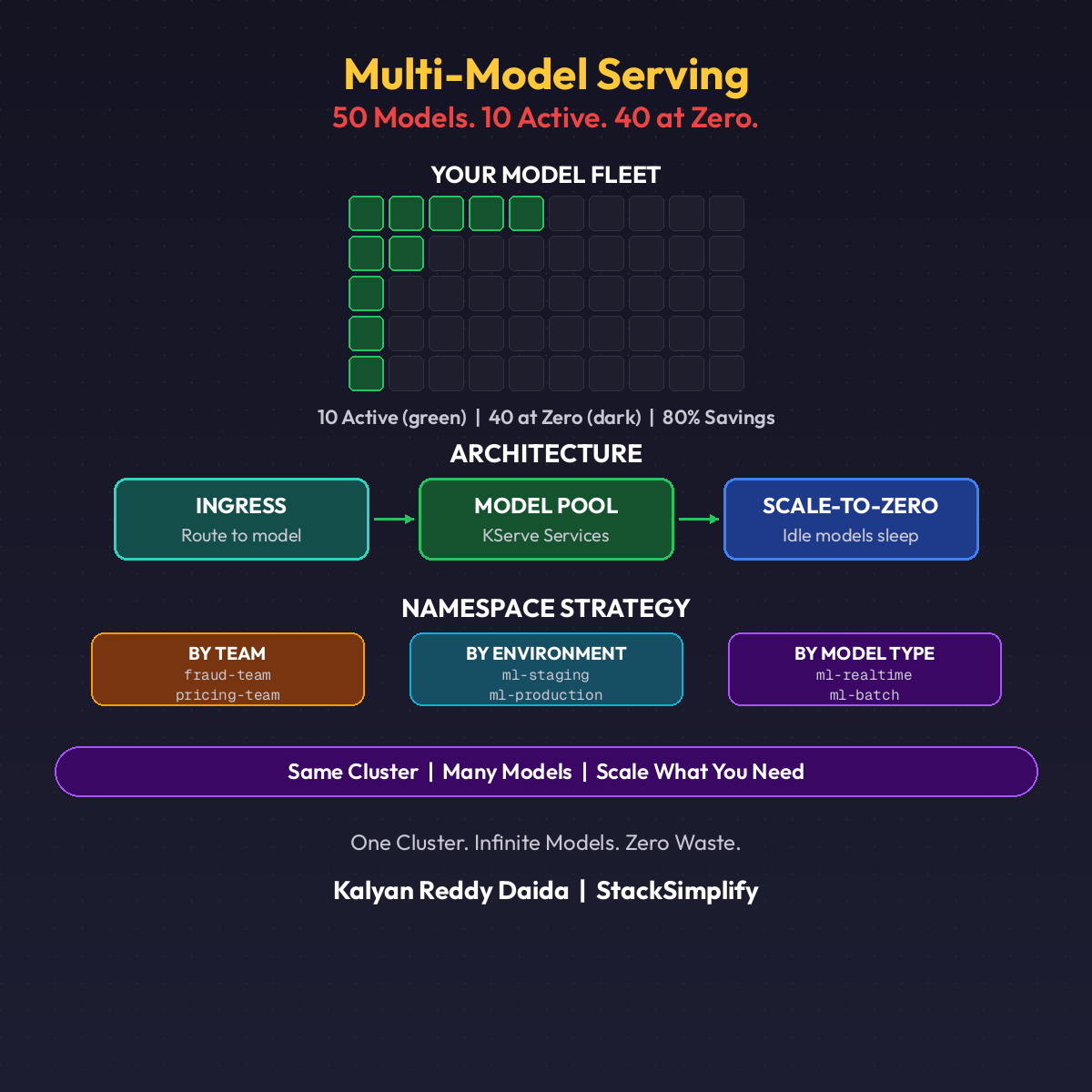

Here is how mature ML platforms run dozens of models on shared infrastructure with close to 80% cost savings.

50 models. 10 active. 40 at zero. One cluster.

That is the reality of a mature ML platform. Not one model per team. Not one namespace per endpoint. Dozens of models sharing infrastructure, scaling independently, and costing almost nothing when idle.

Most teams never get here. They get stuck at the single-model trap.

The Single-Model Trap

Team A deploys their fraud model. Gets its own namespace, its own Istio gateway, its own monitoring stack. Works great.

Team B deploys a churn predictor. Same setup. Another namespace, another gateway, another dashboard.

By model number 10:

- 10 namespaces

- 10 gateways

- 10 sets of credentials

- 10 monitoring stacks

- An infrastructure team that spends more time on plumbing than enabling data scientists

It does not scale.

Multi-Model Architecture

The pattern that works: a shared inference pool.

| Layer | Pattern |
|---|---|
| Cluster | One Kubernetes cluster, owned by the ML Platform team |
| Namespaces | One per team (not per model) |
| InferenceServices | Many KServe InferenceServices per namespace |
| Gateway | One shared Istio ingress, host-based routing |
| Monitoring | One Prometheus + Grafana stack, per-model metrics |

Team Fraud deploys 8 models. Team Recommendations deploys 12. Team Risk deploys 30. All in their own namespaces. All sharing compute.

Multi-Model KServe YAML

Two models in one team namespace, both with scale-to-zero:

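A minimal sketch of what two such InferenceServices could look like, assuming KServe runs in its Knative-backed serverless mode. The model names (fraud-v2, fraud-anomaly), the team-fraud namespace, the model formats, the scaling numbers, and the s3:// storage URIs are all placeholders, not values from a real deployment.

```yaml
# Two models in one team namespace. Names, formats, and URIs are hypothetical.
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: fraud-v2
  namespace: team-fraud
  labels:
    team: fraud
spec:
  predictor:
    minReplicas: 0            # allow scale-to-zero when idle
    maxReplicas: 5            # assumed ceiling; size per model
    scaleMetric: concurrency  # scale on in-flight requests per pod
    scaleTarget: 10           # assumed target; tune per model
    model:
      modelFormat:
        name: xgboost
      storageUri: s3://example-ml-models/fraud-v2
---
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: fraud-anomaly
  namespace: team-fraud
  labels:
    team: fraud
spec:
  predictor:
    minReplicas: 0            # scales to zero independently of fraud-v2
    maxReplicas: 3
    model:
      modelFormat:
        name: sklearn
      storageUri: s3://example-ml-models/fraud-anomaly
```

The exact hostname each model receives depends on the cluster's domain configuration.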

Same namespace. Independent scaling. Independent scale-to-zero. Each model gets a unique hostname automatically.

Scale-to-Zero at 50-Model Scale

This is where it gets interesting. 50 models deployed. Only 10 active at any moment. The other 40 are scaled to zero. No pods. No compute. No cost.

When a request hits a sleeping model, Knative’s Activator intercepts it, spins up the pod, loads the model, and serves the prediction. Cold start: 15 to 30 seconds. Then warm until traffic stops again.
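How long a model stays warm after traffic stops is governed cluster-wide by Knative's autoscaler settings. As a rough sketch, these are the relevant keys in the config-autoscaler ConfigMap. The key names are Knative's; the 10m retention value is an illustrative assumption, not a recommendation.

```yaml
# Sketch of the scale-to-zero knobs in Knative Serving's autoscaler config.
apiVersion: v1
kind: ConfigMap
metadata:
  name: config-autoscaler
  namespace: knative-serving
data:
  enable-scale-to-zero: "true"               # Knative default
  stable-window: "60s"                       # window used to decide traffic has stopped (default)
  scale-to-zero-grace-period: "30s"          # upper bound on waiting for the Activator to take over routing (default)
  scale-to-zero-pod-retention-period: "10m"  # assumed: keep the last pod this long after traffic stops
```

Merge these keys into the existing ConfigMap (for example with kubectl patch) rather than replacing it, since the installed ConfigMap usually carries other settings. A longer retention period trades some idle cost for fewer cold starts.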

The Cost Math

| Setup | Monthly | Annual | 3-Year |
|---|---|---|---|
| Always-on (50 models × $55/mo) | $2,750 | $33,000 | $99,000 |
| Scale-to-zero (~10 active, $12/mo average per model across all 50) | $600 | $7,200 | $21,600 |
| Savings | $2,150 | $25,800 | $77,400 |

Close to 80% savings ($2,150 / $2,750 ≈ 78%) on a single cluster. From one architectural decision.

The catch: cold starts. For batch scoring and internal tools, fine. For customer-facing fraud detection, set minReplicas: 1 on those specific models. Sweet spot: 5-8 critical models always-on, everything else scale-to-zero.
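For the models that cannot tolerate a cold start, the override is a one-line change. A sketch, using a hypothetical fraud-v2 model in a hypothetical team-fraud namespace:

```yaml
# Customer-facing model pinned to one warm replica. Names and URI are hypothetical.
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: fraud-v2
  namespace: team-fraud
spec:
  predictor:
    minReplicas: 1   # never scale below one pod, so no cold start
    maxReplicas: 5   # assumed ceiling
    model:
      modelFormat:
        name: xgboost
      storageUri: s3://example-ml-models/fraud-v2
```

Every other InferenceService in the namespace can keep minReplicas: 0, so only the critical models pay for an always-on pod.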

(See Part 8: Scale-to-Zero and Part 18: ML Cost Optimization for the full cost stack.)

Namespace Strategy

Three patterns. Pick one and commit.

| Strategy | Pattern | Best For |
|---|---|---|
| By Team (recommended) | team-fraud, team-reco, team-risk | 3+ ML teams, self-service |
| By Environment | ml-dev, ml-staging, ml-prod | Small orgs, <5 models total |
| By Model Type | realtime-serving, batch-scoring | Mixed SLA workloads |

Hybrid: team-fraud-prod, team-fraud-staging. More namespaces, cleaner boundaries. Use labels to track team, environment, and cost-center regardless of strategy.
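As a small illustration of the labeling advice, here is what a hybrid namespace could look like. The label keys and values (team, environment, cost-center, cc-1234) are hypothetical; use whatever keys your cost tooling already expects.

```yaml
# Hybrid team + environment namespace with tracking labels (values are hypothetical).
apiVersion: v1
kind: Namespace
metadata:
  name: team-fraud-prod
  labels:
    team: fraud
    environment: prod
    cost-center: cc-1234
```

With consistent labels in place, something like kubectl get namespaces -l team=fraud lists every namespace a team owns, whichever naming strategy you picked.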

Model Routing with Istio

Every InferenceService gets a unique hostname. Istio routes by Host header. One gateway. One IP. Different backends.

Request Flow

- Client POSTs to fraud-v2.ml-serving.example.com

- DNS resolves to the shared Istio IngressGateway IP

- Istio reads the Host header

- VirtualService routes to the correct KServe service

- If the pod is at zero, the Activator intercepts the request and spins it up

- Prediction returned

Custom Path-Based Routing

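One way path-based routing could be sketched with an Istio VirtualService in front of two KServe models. Everything specific here is an assumption: the ml.example.com host, the model and namespace names, the knative-ingress-gateway and knative-local-gateway names (these vary by install), and the -predictor service naming, which differs between KServe versions.

```yaml
# Sketch: route /fraud-v2/... and /fraud-anomaly/... on one host to two models.
# Host, gateway, and service names are assumptions; check them against your cluster.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: fraud-models-by-path
  namespace: team-fraud
spec:
  hosts:
    - ml.example.com
  gateways:
    - knative-serving/knative-ingress-gateway
  http:
    - match:
        - uri:
            prefix: /fraud-v2/
      rewrite:
        uri: /                # strip the model prefix before forwarding
        authority: fraud-v2-predictor.team-fraud.svc.cluster.local
      route:
        - destination:
            host: knative-local-gateway.istio-system.svc.cluster.local
            port:
              number: 80
    - match:
        - uri:
            prefix: /fraud-anomaly/
      rewrite:
        uri: /
        authority: fraud-anomaly-predictor.team-fraud.svc.cluster.local
      route:
        - destination:
            host: knative-local-gateway.istio-system.svc.cluster.local
            port:
              number: 80
```

Under these assumptions, a POST to ml.example.com/fraud-v2/v1/models/fraud-v2:predict would reach the fraud-v2 predictor as /v1/models/fraud-v2:predict. Because traffic still passes through Knative's gateway, scale-to-zero and the Activator keep working.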

Unlimited models. Path-based or host-based. Your choice.

Monitoring 50 Models on One Cluster

Cluster-level dashboards lie. You need per-model metrics.

| Metric | Why |
|---|---|
| Request count | Is this model being used? |
| Latency p50/p95/p99 | Is it fast enough? |
| Error rate | Is it healthy? |
| Pod count | Scaled up or at zero? |
| Cold start frequency | How often does it wake up? |
| Model version | Which version is serving? |

Useful Prometheus Queries

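A sketch of three per-model queries, written as a Prometheus rules file and built on Istio's standard request metrics. The rule names, the team-.* namespace filter, and the 30-day staleness threshold are assumptions, and how the destination_service_name label maps to a model name depends on how your mesh sees Knative revisions.

```yaml
# Sketch: per-model request rate, p99 latency, and a stale-model alert.
# Rule names, namespace filter, and thresholds are hypothetical.
groups:
  - name: per-model-serving
    rules:
      # 1. Requests per second, per model
      - record: model:request_rate:5m
        expr: |
          sum by (destination_service_namespace, destination_service_name) (
            rate(istio_requests_total{destination_service_namespace=~"team-.*"}[5m])
          )
      # 2. p99 latency in milliseconds, per model
      - record: model:latency_p99_ms:5m
        expr: |
          histogram_quantile(0.99,
            sum by (le, destination_service_namespace, destination_service_name) (
              rate(istio_request_duration_milliseconds_bucket{destination_service_namespace=~"team-.*"}[5m])
            )
          )
      # 3. Models with effectively no traffic in the last 30 days
      - alert: ModelStale
        expr: |
          sum by (destination_service_namespace, destination_service_name) (
            increase(istio_requests_total{destination_service_namespace=~"team-.*"}[30d])
          ) < 1
        labels:
          severity: info
        annotations:
          summary: "{{ $labels.destination_service_name }} has served no requests in 30 days"
```

Two caveats on the third rule: it needs at least 30 days of metric retention, and a model that has never received a request produces no series at all, so it will not appear here. Comparing against the list of deployed InferenceServices catches those.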

The third query is underrated. Stale models nobody calls still consume registry space and add complexity. Clean them up.

The DevOps Parallel

If you have run microservices at scale, you already know this pattern.

| Microservices | Multi-Model ML |
|---|---|
| Shared K8s cluster | Shared K8s cluster |
| Per-team namespaces | Per-team namespaces |
| Service mesh routing | Istio host-based routing |
| Independent scaling per service | Independent scaling per InferenceService |
| HPA based on CPU/memory | KPA based on concurrency |

Same architecture. Different workload. The migration from “one cluster per service” to “one cluster, many services” took DevOps a decade. ML is going through the same transition right now.

The Maturity Path

Single model

↓

Multiple models, isolated namespaces (single-model trap)

↓

Shared inference pool (multi-model architecture)

↓

Per-team namespaces, self-service (platform thinking)

↓

Scale-to-zero at scale (this post)

↓

Full self-service ML platform (next: MLOps Maturity Model)

(See Part 16: ML Governance for the model registry that makes this scale, and Part 22: ML Security for the RBAC + mTLS that keeps it safe.)

Quick Reference

| Tool | Role |
|---|---|
| KServe | Per-model InferenceService with KPA autoscaling |
| Knative Serving | Scale-to-zero + Activator for cold starts |
| Istio | Shared gateway + host/path routing |
| Prometheus | Per-model metrics scraping |
| Grafana | Multi-tenant dashboards (cluster → team → model) |

This is Part 23 of the MLOps for DevOps Engineers series.