Scale-to-Zero for ML Models: Stop Paying for Idle Compute

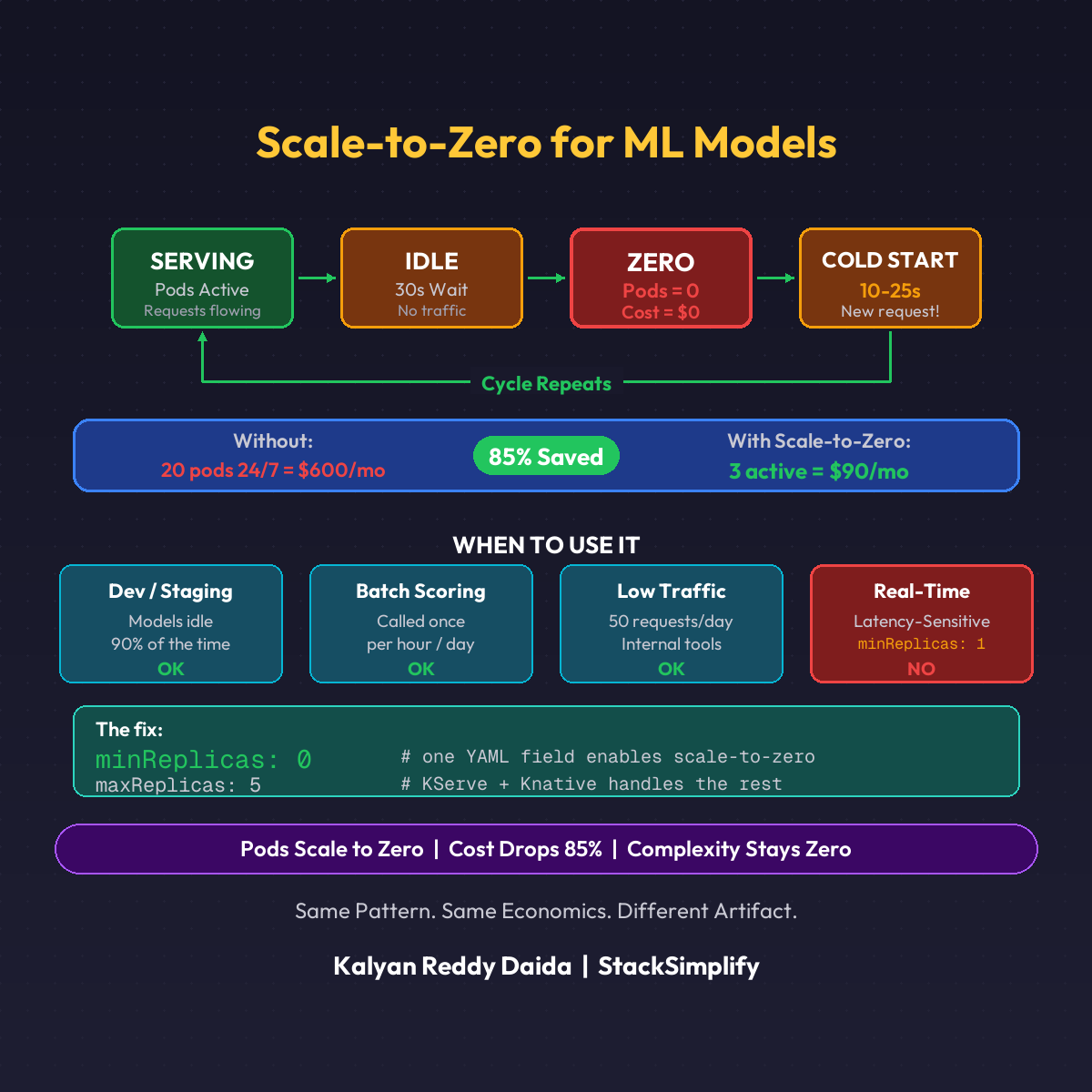

Your ML model runs 24/7. Inference requests arrive 2% of the time. You're paying for compute that sits idle the other 98%.

This is one of the most expensive mistakes in ML deployment. And the fix takes one YAML field: set minReplicas to 0 on the KServe predictor.
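As a minimal sketch, here is where the minReplicas field sits on a KServe InferenceService. The resource name, model format, and storageUri are placeholders chosen for illustration, not values from this series, and the example assumes KServe is running in its Knative-backed serverless mode, which is where scale-to-zero applies.

```yaml
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: demo-model                 # hypothetical name
spec:
  predictor:
    minReplicas: 0                 # lets Knative scale this predictor down to zero pods
    model:
      modelFormat:
        name: sklearn              # assumed model format, for illustration only
      storageUri: s3://example-bucket/models/demo   # placeholder model location
```

KServe defaults minReplicas to 1, so a model stays up around the clock unless you set this explicitly.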

How It Works

KServe running on Knative handles this natively. The lifecycle looks like this:

- Your model is serving requests

- Traffic drops. 30 seconds of silence (the exact idle window depends on Knative's autoscaler settings)

- Knative scales pods to ZERO

- New request arrives. Knative holds it while a pod starts

- Pod spins up in seconds. Request served.

Zero requests = zero pods = zero cost.
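How long the silence lasts before pods disappear is governed by Knative's autoscaler configuration. The sketch below lists the relevant keys in the config-autoscaler ConfigMap with what I understand to be Knative's default values; treat the numbers as assumptions and check them against your own cluster. It is meant to illustrate the knobs, so edit the existing ConfigMap rather than replacing it wholesale.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: config-autoscaler
  namespace: knative-serving
data:
  # Cluster-wide switch; scale-to-zero is only possible when this is "true".
  enable-scale-to-zero: "true"
  # Window over which request metrics are averaged. The autoscaler only
  # considers scaling to zero after a full window with no traffic.
  stable-window: "60s"
  # Upper bound on how long the last pod is kept while Knative reroutes
  # traffic so that new requests can be buffered during a cold start.
  scale-to-zero-grace-period: "30s"
  # Minimum time the last pod stays up after the decision to scale to zero.
  # Raising it trades some idle cost for fewer cold starts on bursty traffic.
  scale-to-zero-pod-retention-period: "0s"
```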

The Cold Start Trade-off

| Metric | Time |
|---|---|
| Pod startup | 5-15 seconds |
| Model loading | 2-10 seconds |
| First prediction total | 10-25 seconds |

For real-time APIs? Too slow. For batch scoring? Perfect. For dev/staging? No-brainer.

When to Use Scale-to-Zero

| Use Case | Scale-to-Zero? |
|---|---|
| Dev and staging environments | Yes. Idle 90% of the time |
| Batch scoring endpoints | Yes. Called once per hour/day |
| Low-traffic models (50 req/day) | Yes. Best cost-performance ratio |
| 20 models deployed, 3 active | Yes. Scale-to-zero the other 17 |
| Real-time, latency-sensitive | No. Keep minReplicas: 1 |
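For the last row, the same manifest shape applies with a floor of one replica. This is a sketch under the same assumptions as before: the name, model format, storageUri, and the maxReplicas ceiling are illustrative placeholders, not recommendations.

```yaml
apiVersion: serving.kserve.io/v1beta1
kind: InferenceService
metadata:
  name: realtime-model             # hypothetical name
spec:
  predictor:
    minReplicas: 1                 # always keep one warm pod, so no cold start
    maxReplicas: 3                 # assumed ceiling; size this to your peak traffic
    model:
      modelFormat:
        name: sklearn              # assumed model format, for illustration only
      storageUri: s3://example-bucket/models/realtime   # placeholder model location
```

With 20 models and 3 active, only the 3 latency-sensitive ones would carry minReplicas: 1; the other 17 can use minReplicas: 0.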

The DevOps Parallel

You already know this pattern.

- AWS Lambda scales functions to zero when idle

- Knative does the same for containers

- KServe does the same for ML models

Same pattern. Same economics. Different artifact. (More cost strategies in Part 18: ML Cost Optimization.)

This is Part 8 of the MLOps for DevOps Engineers series.