SHAP Explainability: Why Your ML Model Flagged That Transaction

GDPR and sector regulators expect you to be able to explain automated decisions. SHAP values show how much each feature contributed to a given prediction. Here is how KServe serves explanations.

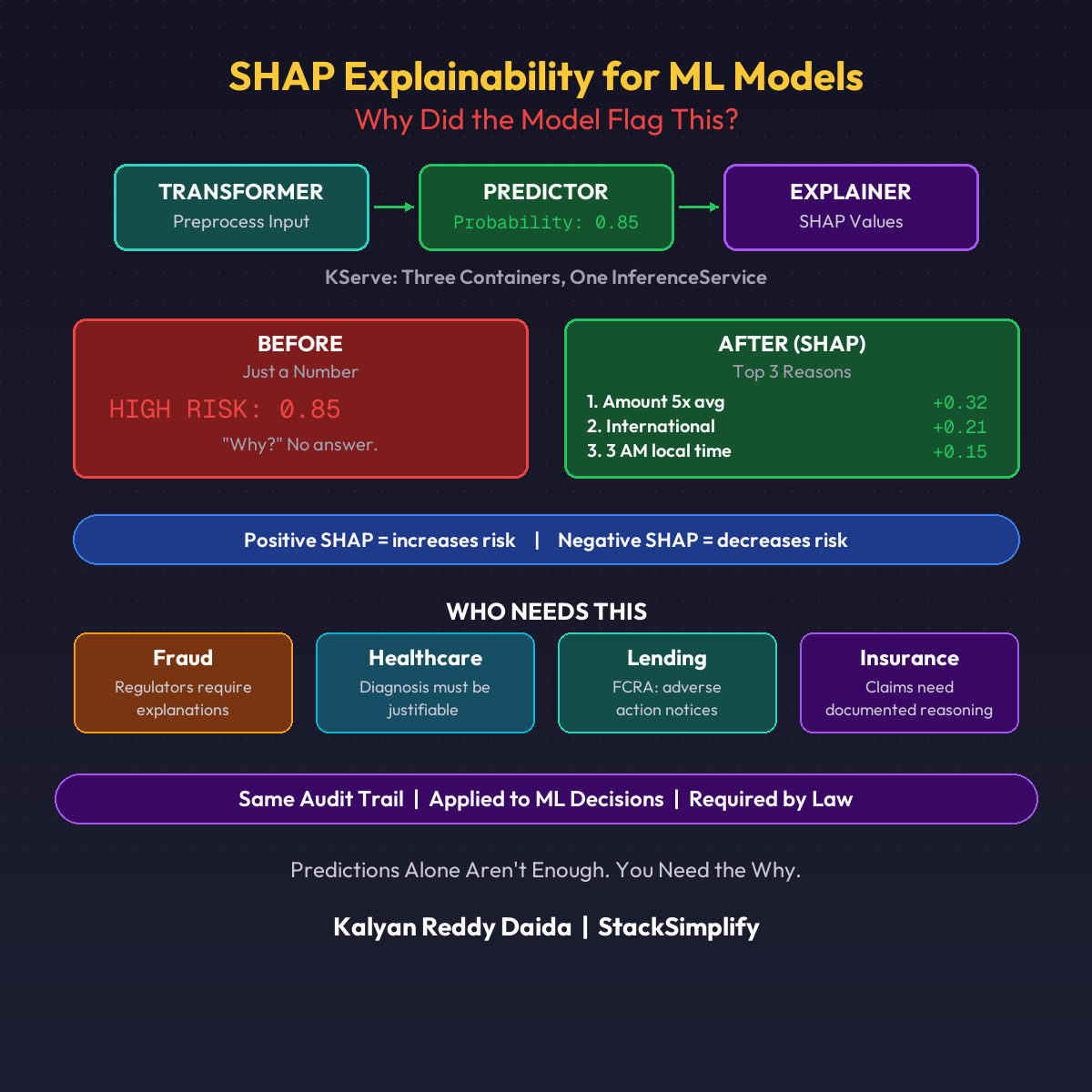

Your ML model flagged a customer’s transaction. They call support and ask: “Why?”

If you can’t answer, you might be breaking the law.

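GDPR Article 22 restricts decisions based solely on automated processing, and together with Recital 71 it is widely read as giving users the right to an explanation of those decisions. Financial regulators require it. Healthcare demands it.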

The Explanation

Instead of just HIGH RISK: 0.85, you get:

| Feature | SHAP Value | Impact |
|---|---|---|
| Amount 5x higher than average | +0.32 | Increases risk |
| International from unusual country | +0.21 | Increases risk |
| Transaction at 3 AM local time | +0.15 | Increases risk |

Each number is a SHAP value. It tells you how much that feature pushed the prediction away from the model's baseline, which is its average output. Positive = increases risk. Negative = decreases risk.
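To make the arithmetic concrete, here is a minimal sketch of the additivity property behind SHAP: the baseline plus all the SHAP values adds up to the model's output. The three contributions come from the table above. The baseline of 0.17 is an assumption, picked only so the numbers sum to the 0.85 score, and the feature keys are illustrative names. It also assumes the explainer works in probability space; many classifiers are explained in log-odds instead.

```python
import math

# SHAP values copied from the table above (keys are illustrative names).
shap_values = {
    "amount_5x_average": 0.32,
    "international_unusual_country": 0.21,
    "transaction_at_3am": 0.15,
}

# ASSUMPTION: the article does not state the baseline (average model output).
# 0.17 is chosen so the three contributions add up to the 0.85 score.
base_value = 0.17


def reconstruct_prediction(base, contributions):
    """Additivity: prediction = baseline + sum of all SHAP values."""
    return base + sum(contributions.values())


def rank_by_impact(contributions):
    """Sort features by absolute contribution, largest first."""
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


prediction = reconstruct_prediction(base_value, shap_values)

for name, value in rank_by_impact(shap_values):
    direction = "increases" if value > 0 else "decreases"
    print(f"{name}: {value:+.2f} ({direction} risk)")
print(f"reconstructed score: {prediction:.2f}")
```

In a real model there are usually more features than three, many with small or negative values, so the top few reasons explain most of the score but not all of it.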

How KServe Serves Explanations

KServe supports an Explainer container alongside your Transformer and Predictor. Three containers. One InferenceService. (See the Transformer-Predictor pattern for how the first two work.)

| Container | What It Does |
|---|---|
| Transformer | Preprocess raw input into model features |
| Predictor | Return probability (0.85) |
| Explainer | Return why it predicted 0.85 |
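From the client side, the difference shows up in the URL. In the KServe v1 protocol, predictions go to `:predict` and explanations go to `:explain` on the same model path. The sketch below builds both requests with the standard library. The base URL and the model name `fraud-detector` are hypothetical, and the shape of the explanation response depends on the explainer you deploy, so treat this as a starting point rather than a reference client.

```python
import json
import urllib.request


def kserve_url(base_url, model_name, verb):
    """Build a KServe v1 protocol URL; verb is 'predict' or 'explain'."""
    if verb not in ("predict", "explain"):
        raise ValueError(f"unsupported verb: {verb}")
    return f"{base_url.rstrip('/')}/v1/models/{model_name}:{verb}"


def build_request(base_url, model_name, verb, instances):
    """Wrap the feature rows in the v1 'instances' payload."""
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        kserve_url(base_url, model_name, verb),
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call(base_url, model_name, verb, instances, timeout=10):
    """Send the request and decode the JSON response (needs a live service)."""
    request = build_request(base_url, model_name, verb, instances)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())


# Hypothetical values for illustration only.
BASE_URL = "http://fraud-detector.example.com"
MODEL = "fraud-detector"

print(kserve_url(BASE_URL, MODEL, "predict"))
print(kserve_url(BASE_URL, MODEL, "explain"))
```

With a running service, `call(BASE_URL, MODEL, "explain", rows)` would return the explainer's output for the same rows you send to `:predict`. Depending on your ingress setup you may also need to set a `Host` header.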

The DevOps Parallel

Application audit logging: “User X accessed resource Y. Action: denied. Reason: insufficient permissions.”

ML audit logging (SHAP): “Transaction X flagged. Prediction: fraud. Reason: amount 5x average, international, 3 AM.”

Same principle. Audit trail for automated decisions.
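As a rough sketch of what that audit trail could look like, the function below turns a score and its SHAP values into a structured log record with the top reasons attached. The field names, the 0.5 threshold, and the transaction ID are all assumptions for illustration; use whatever schema your logging pipeline already expects.

```python
import json
from datetime import datetime, timezone


def build_audit_record(transaction_id, score, contributions, threshold=0.5, top_k=3):
    """Build an audit entry: the decision plus the features that drove it.

    Only features that increased risk (positive SHAP value) are listed as
    reasons, ordered from largest contribution to smallest.
    """
    risk_drivers = [(name, value) for name, value in contributions.items() if value > 0]
    risk_drivers.sort(key=lambda kv: kv[1], reverse=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "transaction_id": transaction_id,
        "score": score,
        "decision": "flagged" if score >= threshold else "allowed",
        "reasons": [
            {"feature": name, "shap_value": value}
            for name, value in risk_drivers[:top_k]
        ],
    }


# Values from the example above; the transaction ID is hypothetical.
record = build_audit_record(
    "tx-123",
    0.85,
    {
        "amount_5x_average": 0.32,
        "international_unusual_country": 0.21,
        "transaction_at_3am": 0.15,
    },
)
print(json.dumps(record, indent=2))
```

Emitting one JSON object per decision keeps the ML audit log searchable with the same tooling you already use for application audit logs.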

Who Needs This

| Industry | Requirement |
|---|---|
| Fraud detection | Regulators require explanations |
| Healthcare | Diagnosis must be justifiable |
| Lending | FCRA requires adverse action notices |
| Insurance | Claims need documented reasoning |

If your ML model makes decisions that affect people, you need explainability.

This is Part 9 of the MLOps for DevOps Engineers series.