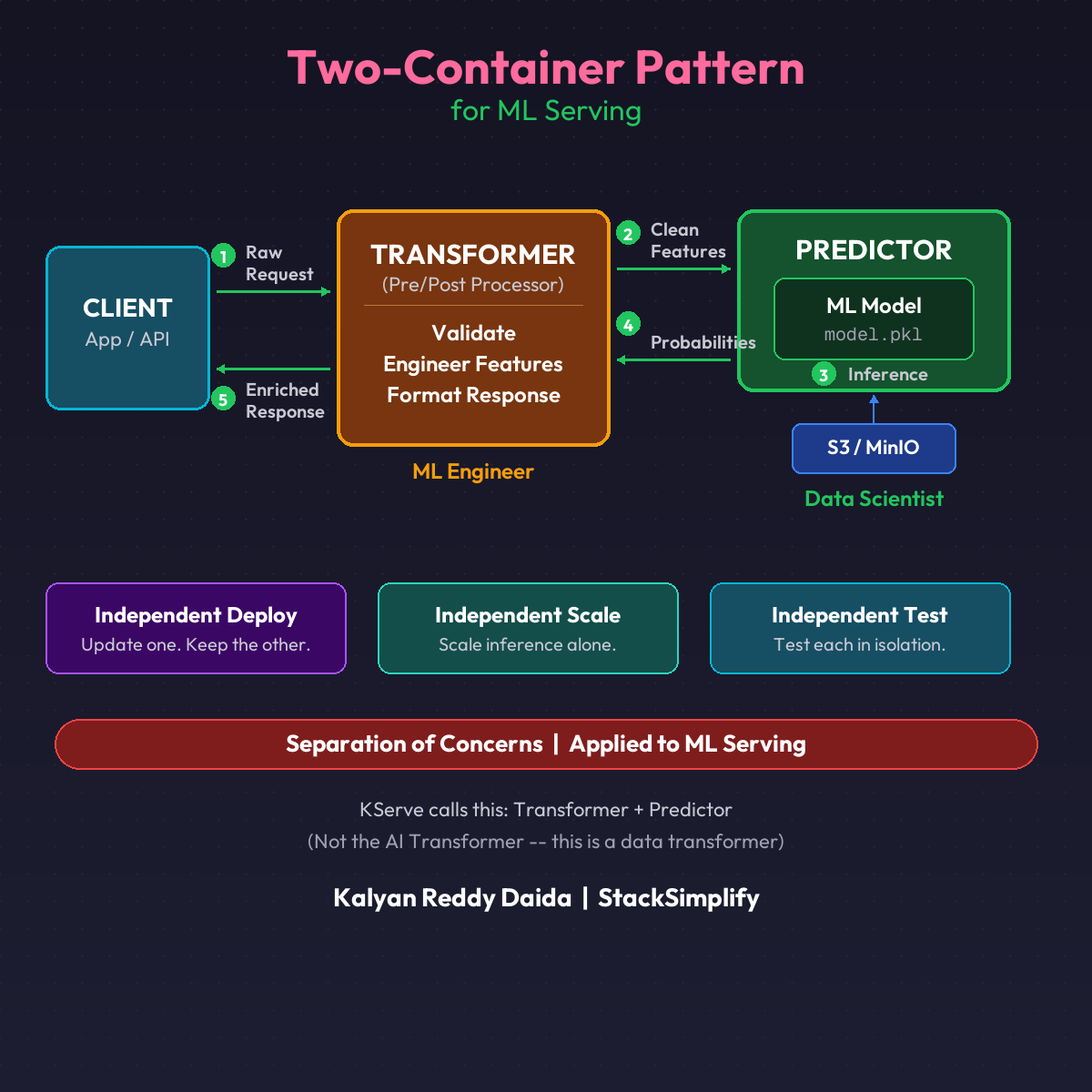

The Two-Container Pattern: Transformer + Predictor for ML Serving

Your ML model expects clean features. Your API receives raw data. The two-container pattern with KServe solves this with clear separation of concerns.

Your ML model expects clean features. Your API receives raw data. Where does the preprocessing live?

Most teams get this wrong the first time. They stuff everything into one container: data validation, feature engineering, ML inference, output formatting. It works. Until it doesn’t.

The Problem with One Container

Model retrained? Rebuild the whole container. Feature logic changed? Rebuild the whole container. Need to scale inference independently? Everything scales together. Or breaks together.

One change. Full redeploy. Every time.

The Fix: Two Containers

| Container | Responsibility | Owned By |
|---|---|---|
| Transformer | Validate inputs, engineer features, format output | ML Engineer |
| Predictor | Load model, run inference, return raw scores | Data Scientist |

(Not the Transformer neural-network architecture. In KServe, a transformer is the component that pre- and post-processes data around the predictor.)

The Full Flow

- Client sends raw request to Transformer

- Transformer validates and engineers features

- Clean features passed to Predictor

- Predictor runs inference, returns probabilities

- Transformer adds business labels, formats response

- Enriched response back to client

Round trip. Two containers. Neither knows the other’s internals.
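The round trip above can be sketched in plain Python. This is a minimal illustration, not KServe code: the field names (`age`, `income`), the `approve`/`review` labels, the 0.5 threshold, and `fake_predictor` are all hypothetical. The `instances`/`predictions` payload keys follow the KServe V1 inference protocol. In a real deployment, the two transformer functions would typically become the `preprocess` and `postprocess` methods of a class derived from `kserve.Model`, and the predictor call would be an HTTP request that KServe routes for you.

```python
# Hypothetical feature names and label threshold, chosen only for illustration.
REQUIRED_FIELDS = ("age", "income")
THRESHOLD = 0.5


def preprocess(raw: dict) -> dict:
    """Transformer, inbound: validate raw records and build feature vectors."""
    instances = []
    for record in raw["records"]:
        missing = [f for f in REQUIRED_FIELDS if f not in record]
        if missing:
            raise ValueError(f"missing fields: {missing}")
        # Toy feature engineering: rescale income to thousands.
        instances.append([float(record["age"]), float(record["income"]) / 1000.0])
    return {"instances": instances}


def postprocess(scores: dict) -> dict:
    """Transformer, outbound: attach business labels to raw probabilities."""
    return {
        "results": [
            {"probability": p, "label": "approve" if p >= THRESHOLD else "review"}
            for p in scores["predictions"]
        ]
    }


def fake_predictor(features: dict) -> dict:
    """Stand-in for the Predictor container: one score per feature vector."""
    return {
        "predictions": [0.9 if row[1] > 50 else 0.2 for row in features["instances"]]
    }


def handle(raw: dict, predict=fake_predictor) -> dict:
    """Full flow: raw request -> features -> scores -> enriched response."""
    return postprocess(predict(preprocess(raw)))


if __name__ == "__main__":
    request = {"records": [{"age": 30, "income": 80000}, {"age": 22, "income": 20000}]}
    print(handle(request))
```

Because `preprocess` and `postprocess` only agree with the predictor on the payload shape, each side can be swapped or unit-tested without the other, which is the point of the split.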

Why This Changes Everything

- Model retrained? Only the Predictor redeploys

- Feature logic changed? Only the Transformer redeploys

- Scale inference? Scale the Predictor alone

- Debug preprocessing? Test the Transformer in isolation

Independent lifecycles. Independent scaling. Independent testing. One YAML file. Two containers. KServe handles routing automatically.
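To make the "one file, two containers" idea concrete, here is a hedged sketch that builds an `InferenceService` manifest as a Python dictionary and prints it as JSON (kubectl accepts JSON manifests as well as YAML). The service name, image, storage URI, `sklearn` model format, and replica counts are placeholders, not values from a real deployment. The structure assumes the `serving.kserve.io/v1beta1` API, where `spec.transformer` and `spec.predictor` are declared side by side and each carries its own replica bounds.

```python
import json


def build_inference_service(name, model_uri, transformer_image,
                            predictor_max_replicas=5):
    """Return a KServe InferenceService manifest with a transformer and a predictor."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {
            # Transformer: custom container holding validation and feature logic.
            "transformer": {
                "minReplicas": 1,
                "maxReplicas": 2,
                "containers": [
                    {"name": "kserve-container", "image": transformer_image}
                ],
            },
            # Predictor: model server that loads the artifact from storage.
            # Its replica bounds are set independently of the transformer's.
            "predictor": {
                "minReplicas": 1,
                "maxReplicas": predictor_max_replicas,
                "model": {
                    "modelFormat": {"name": "sklearn"},  # placeholder format
                    "storageUri": model_uri,
                },
            },
        },
    }


# All values below are hypothetical placeholders.
manifest = build_inference_service(
    name="credit-scoring",
    model_uri="gs://example-bucket/models/credit/v2",
    transformer_image="registry.example.com/credit-transformer:1.4.0",
)

if __name__ == "__main__":
    print(json.dumps(manifest, indent=2))
```

In this sketch, a retrained model only changes `model_uri` and new feature logic only changes `transformer_image`, so each edit touches one component of the manifest.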

When to Use This Pattern

Not every model needs two containers. Here’s when the split makes sense:

| Scenario | Pattern |
|---|---|
| Simple model, minimal preprocessing | Single container is fine |
| Complex feature engineering | Two containers |
| Model and preprocessing change at different rates | Two containers |
| Need to scale inference independently | Two containers |
| Multiple models share the same preprocessing | Two containers (shared Transformer) |

Start simple. Split when the single container becomes a deployment bottleneck.

The DevOps Parallel

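If you’ve built microservices, you already know this principle. The sidecar pattern in Kubernetes applies the same idea: two containers, each with a clear responsibility. One difference: a sidecar shares a pod with its main container, while KServe by default deploys the Transformer and the Predictor as separate workloads, which is what lets them scale independently.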

Separation of concerns. Applied to ML serving.

This is Part 7 of the MLOps for DevOps Engineers series. Next: Scale-to-Zero for ML models.
